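Target-Specific Sentiment Analysis (TSSA) is a classic task in natural language processing that aims to determine the sentiment polarity a text expresses by mining and analyzing its semantics. Given a complete sentence and the target words it contains, the task is to infer the sentiment polarity (positive, negative, or neutral) of each target word. For example, the sentence "The menu is limited but almost all of the dishes are excellent." contains two target words, "menu" and "dishes"; the goal of TSSA is to determine that the polarity of "menu" is negative and that of "dishes" is positive.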

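TSSA is a fine-grained sentiment analysis task that has attracted a large body of work worldwide. Approaches generally fall into traditional machine learning methods and deep learning methods. Traditional machine learning methods rely on large sets of hand-crafted features to improve performance, which is labor-intensive. Deep learning methods, by contrast, extract semantic features from text automatically and have developed rapidly in this area. Building on LSTM[1], researchers introduced attention mechanisms to address the weak representation of long sentences, with good results. Although attention can capture more of a sentence's sentiment information, most current work uses simple attention mechanisms. When the sentiment expression is a multi-word phrase, simple attention cannot effectively extract the semantics of the phrase as a whole, which easily causes ambiguity and misclassification. Introducing phrase-level semantic features effectively mitigates this problem.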

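To this end, this paper proposes the Phrase-Enabled Multi-Attention Network (PEMAN), a multi-attention network that introduces phrase-level semantic representations and fuses features at multiple granularities to address the problem above. The model is evaluated on the Laptop and Restaurant datasets of SemEval2014[2]. The results show that PEMAN improves on the baseline models, reaching accuracies of 74.9% and 80.6% on the two datasets, respectively.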

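2 Related Work

Traditional machine learning methods obtain sentence features from sentiment lexicons, sentence analysis, and similar resources, and then predict sentiment with a classifier. For example, Vo et al.[3] proposed a sentiment analysis model for Twitter data that uses sentiment lexicons and multiple word embeddings to extract semantic features, improving accuracy. Kiritchenko et al.[4] built features from a bag-of-words model, sentiment lexicons, and semantic parsing, and trained a Support Vector Machine (SVM) classifier for sentiment classification. These methods perform well, but their effectiveness depends on complex feature extraction and design, which requires considerable manual effort and resources.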

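In recent years, a growing number of researchers have applied deep learning to TSSA. Tang et al.[5] proposed the TD-LSTM and TC-LSTM models, which use two LSTMs to model the left and right context of the target separately and thereby obtain a better text representation. Wang et al.[6] proposed an attention-based classification model that uses attention to capture the important information in the encoded sentence representation. Tang et al.[7] proposed a deep memory network that linearly combines multiple attention layers collecting target-related features in order to improve the accuracy of the attention network. Ma et al.[8] used two attention networks to compute semantic representations of the target and of the context and built an interactive representation of them for sentiment classification. Chen et al.[9] proposed a recurrent attention structure that obtains multi-layer sentence features and combines them nonlinearly with a GRU, giving the model greater expressive power. Huang et al.[10] used an attention-over-attention mechanism to compute interactions between sentence and target features, improving accuracy.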

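In existing work on TSSA, when the sentiment expression associated with a target consists of several words, errors can arise because the semantics of those words are not fused properly. For example, in the sentence "Great food and the service was not bad.", the sentiment toward the target "service" is expressed by the phrase "not bad", which is positive. Faced with such an example, earlier models may focus on the single word "bad" and predict negative sentiment, producing a classification error. To address this, this paper proposes the PEMAN model, which introduces phrase-level semantic representations to obtain a richer semantic representation of the sentence and fuses features across multiple attention networks, in order to alleviate the problem of scattered attention.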

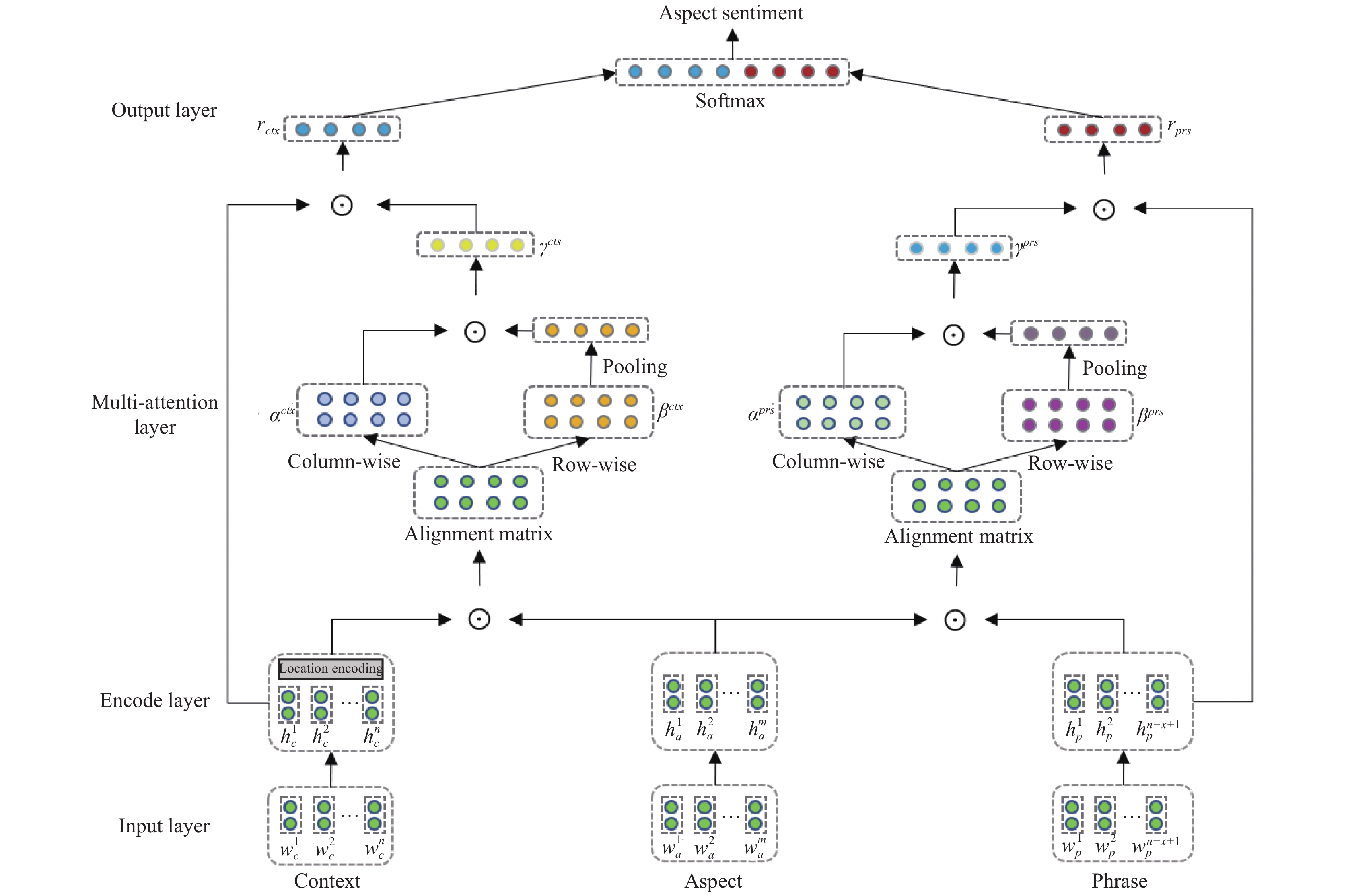

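3 PEMAN Model

The overall framework of the proposed phrase-enabled multi-attention network, PEMAN, is shown in Figure 1. It consists mainly of an input layer, an encoding layer, a multi-attention layer, and an output layer:

(1) Input layer: processes the model inputs and performs vector embedding.

(2) Encoding layer: encodes the inputs with Bi-LSTM[11] and embeds position information.

(3) Multi-attention layer: applies two attention interaction matrices to the hidden-state outputs to obtain the final semantic representation.

(4) Output layer: performs sentiment classification on the semantic representation produced by the multi-attention layer.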

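3.1 Input Layer

The model takes three inputs: the sentence representation, the target representation, and the phrase-set representation. Given a sentence of length n from a dataset and a target of length m, word embedding yields the sentence representation C and the target representation A, where d is the embedding dimension: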

| $C = [W_c^1;W_c^2;\cdots;W_c^n] \in {R^{n \times d}}$ |

| $A = [W_a^1;W_a^2;\cdots;W_a^m] \in {R^{m \times d}}$ |

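A set of vectors for extracting phrase features is then constructed. Specifically, let the phrase length be x; for a sentence C of length n, the phrase vectors are computed as in equation (1):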

| $p_i = \max \left( C[i:i + x] \right),\; i = 1,2,\cdots,n - x + 1$ | (1) |

where

Figure 1. Structure of the PEMAN model
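The sliding-window construction of equation (1) can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes that the max in equation (1) is an element-wise maximum over the x word vectors in each window, and the function name `phrase_vectors` and the toy values are hypothetical.

```python
def phrase_vectors(C, x):
    """Element-wise max over every window of x consecutive word vectors.

    C is a list of n word vectors (each a list of d floats).
    Returns n - x + 1 phrase vectors, one per window.
    Assumption: "max" in equation (1) means element-wise max pooling.
    """
    n = len(C)
    if not 1 <= x <= n:
        raise ValueError("phrase length x must satisfy 1 <= x <= n")
    phrases = []
    for i in range(n - x + 1):  # 0-based here; equation (1) counts from 1
        window = C[i:i + x]
        # zip(*window) iterates over embedding dimensions
        phrases.append([max(column) for column in zip(*window)])
    return phrases


# Toy sentence with n = 4 words and d = 2 dimensions (made-up values).
C = [[0.1, 0.9], [0.4, 0.2], [0.3, 0.5], [0.8, 0.0]]
P = phrase_vectors(C, x=3)
print(P)
```

With a phrase length of 3, a four-word sentence yields two phrase vectors, one per window position.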

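3.2 Encoding Layer

The word-vector representations of the sentence, the target, and the phrase set produced by the input layer are fed into three separate Bi-LSTMs, which learn the hidden semantic information of the whole sentence, the target, and the phrase set, respectively.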

| $\overrightarrow {{h_c}} = \overrightarrow {LSTM} ([W_c^1;W_c^2;\cdots;W_c^n])$ | (2) |

| $\overleftarrow {{h_c}} = \overleftarrow {LSTM} ([W_c^1;W_c^2;\cdots;W_c^n])$ | (3) |

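Equation (2) gives the forward hidden-state output of the Bi-LSTM, which extracts the forward semantic features of the sentence. Equation (3) gives the backward hidden-state output, which extracts the backward semantic features. Concatenating the two gives the hidden-state output of the sentence representation, $h_c$: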

| ${h_c} = [\overrightarrow {{h_c}} ,\overleftarrow {{h_c}} ]$ | (4) |

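Similarly, the hidden-state outputs of the target representation, $h_a$, and of the phrase-set representation, $h_p$, are obtained: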

| ${h_a} = [\overrightarrow {{h_a}} ,\overleftarrow {{h_a}} ]$ | (5) |

| ${h_p} = [\overrightarrow {{h_p}} ,\overleftarrow {{h_p}} ]$ | (6) |

where

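Sentiment words that are closer to the target in a sentence are more likely to express the target's sentiment polarity. Therefore, after obtaining the sentence representation, the model should also take full account of the positions of the target and the context words.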

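Given a sentence of length n and a target of length m, the position weight $v_t$ of any word in the sentence is computed from its distance $l$ to the target, as in equation (7):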

| ${v_t} = 1 - \frac{l}{{n - m + 1}}$ | (7) |

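Once the position weights are obtained, the final semantic representation of the sentence, which incorporates the position weights, is computed by equation (8):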

| ${h_c} = [h_c^1 * {v_1},h_c^2 * {v_2},\cdots,h_c^n * {v_n}]$ | (8) |
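Equations (7) and (8) can be illustrated with a short sketch. It assumes that l is the number of positions between a word and the nearest word of the target span, with l = 0 for the target words themselves; the surviving text does not spell this out. The function names and the toy sentence are hypothetical.

```python
def position_weights(n, start, m):
    """Compute v_t = 1 - l / (n - m + 1) for each of the n words.

    start is the 0-based index of the first target word, m the target length.
    Assumption: l is the distance to the nearest target word (0 inside the target).
    """
    end = start + m - 1
    weights = []
    for t in range(n):
        if t < start:
            l = start - t
        elif t > end:
            l = t - end
        else:
            l = 0
        weights.append(1 - l / (n - m + 1))
    return weights


def apply_position_weights(h_c, weights):
    """Equation (8): scale each hidden state h_c^t by its weight v_t."""
    return [[v * value for value in h_t] for h_t, v in zip(h_c, weights)]


# Six-word sentence whose one-word target sits at index 2 (made-up example).
v = position_weights(n=6, start=2, m=1)
print(v)
```

Because l never exceeds n - m, every weight stays strictly positive, so distant words are down-weighted rather than discarded.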

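After the semantic representations of the sentence, the target, and the phrase set ($h_c$, $h_a$, and $h_p$) are obtained, the interaction matrix between the sentence and the target is computed as in equation (9):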

| ${I^{ctx}} = {h_c} \cdot h_a^{\rm T}$ | (9) |

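For the interaction matrix $I^{ctx}$, a column-wise softmax and a row-wise softmax give the attention weights $\alpha^{ctx}$ and $\beta^{ctx}$ in equation (10), and $\beta^{ctx}$ is then averaged over $i$ in equation (11):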

| $\alpha _{ij}^{ctx} = \frac{{\exp (I_{ij}^{ctx})}}{{\displaystyle\sum\nolimits_i {\exp (I_{ij}^{ctx})} }},\beta _{ij}^{ctx} = \frac{{\exp (I_{ij}^{ctx})}}{{\displaystyle\sum\nolimits_j {\exp (I_{ij}^{ctx})} }}$ | (10) |

| $\beta _j^{ctx} = \frac{1}{n}\sum\nolimits_i {\beta _{ij}^{ctx}} $ | (11) |

The attention weight distribution over all words in the sentence, $\gamma^{ctx}$, is given by equation (12):

| ${\gamma ^{ctx}} = {\alpha ^{ctx}} \cdot {\overline {{\beta ^{ctx}}} ^{\rm T}}$ | (12) |
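The computation in equations (9) to (12) can be sketched in plain Python as below. This is an illustrative reading of the formulas rather than the authors' code: hidden states are passed as lists of lists, and the helper names and toy values are hypothetical.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def attention_over_attention(h_c, h_a):
    """Return gamma, one attention weight per row of h_c."""
    # Equation (9): I = h_c · h_a^T, an n x m interaction matrix.
    I = [[sum(a * b for a, b in zip(row_c, row_a)) for row_a in h_a] for row_c in h_c]
    n, m = len(I), len(I[0])
    # Equation (10), alpha: softmax over i within each column j.
    alpha_cols = [softmax([I[i][j] for i in range(n)]) for j in range(m)]
    alpha = [[alpha_cols[j][i] for j in range(m)] for i in range(n)]
    # Equation (10), beta: softmax over j within each row i.
    beta = [softmax(row) for row in I]
    # Equation (11): average beta over the n rows.
    beta_bar = [sum(beta[i][j] for i in range(n)) / n for j in range(m)]
    # Equation (12): gamma = alpha · beta_bar^T.
    return [sum(alpha[i][j] * beta_bar[j] for j in range(m)) for i in range(n)]


# Toy hidden states: n = 3 sentence positions, m = 2 target positions.
h_c = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
h_a = [[1.0, 0.0], [0.5, 0.5]]
gamma = attention_over_attention(h_c, h_a)
print(gamma)
```

Since each column of alpha sums to 1 and beta_bar sums to 1, gamma is itself a probability distribution over the sentence positions.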

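Similarly, for the phrase-set representation $h_p$, the attention weights $\gamma^{prs}$ are computed as in equations (13) to (16):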

| ${I^{prs}} = {h_p} \cdot h_a^{\rm T}$ | (13) |

| $\alpha _{ij}^{prs} = \frac{{\exp (I_{ij}^{prs})}}{{\displaystyle\sum\nolimits_i {\exp (I_{ij}^{prs})} }},\beta _{ij}^{prs} = \frac{{\exp (I_{ij}^{prs})}}{{\displaystyle\sum\nolimits_j {\exp (I_{ij}^{prs})} }}$ | (14) |

| $\beta _j^{prs} = \frac{1}{n}\sum\nolimits_i {\beta _{ij}^{prs}} $ | (15) |

| ${\gamma ^{prs}} = {\alpha ^{prs}} \cdot {\overline {{\beta ^{prs}}} ^{\rm T}}$ | (16) |

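In the output layer, the sentence representation $h_c$ and the phrase-set representation $h_p$ are weighted by their respective attention distributions to give $r_{ctx}$ and $r_{prs}$: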

| ${r_{ctx}} = h_c^{\rm T} \cdot {\gamma ^{ctx}}$ | (17) |

| ${r_{prs}} = h_p^{\rm T} \cdot {\gamma ^{prs}}$ | (18) |

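The final semantic representation of the sentence, r, is the concatenation of the two. It is fed into a Softmax layer as the final sentence feature to obtain the probability distribution over the classes: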

| $r = [{r_{ctx}};{r_{prs}}]$ | (19) |

| $y = L_{\rm Softmax} ({W_l} * r + {b_l})$ | (20) |

where

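The model is trained end to end by backpropagation, with cross-entropy[13] as the loss function and a regularization term[14] added to reduce overfitting, as shown in equation (21):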

| $loss = - \sum\nolimits_k {\sum\nolimits_{i \in C} {y_i^g \cdot \log ({y_i})} } + \lambda \lVert \theta \rVert^2$ | (21) |

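Here k indexes the samples in the training set and C is the set of classes; there are three classes in this experiment. λ is the L2 regularization coefficient. In the classifier output y, the class whose probability $y_i$ is largest is taken as the predicted label. To further prevent overfitting, dropout[15] is also used.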
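The output layer and the training objective can be illustrated with the sketch below. It is not the authors' implementation: the cross-entropy is written with the conventional leading minus sign so that minimizing it raises the probability of the gold class, gold labels are given as class indices (equivalent to one-hot $y^g$), and all names and toy values are hypothetical.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def classify(r_ctx, r_prs, W_l, b_l):
    """Equations (19)-(20): concatenate both representations, then softmax."""
    r = r_ctx + r_prs  # list concatenation plays the role of [r_ctx; r_prs]
    logits = [sum(w * v for w, v in zip(row, r)) + b for row, b in zip(W_l, b_l)]
    return softmax(logits)


def regularized_loss(batch_probs, batch_gold, params, lam):
    """Cross-entropy summed over the batch plus lam * ||theta||^2."""
    cross_entropy = -sum(math.log(probs[g]) for probs, g in zip(batch_probs, batch_gold))
    l2_penalty = lam * sum(p * p for p in params)
    return cross_entropy + l2_penalty


# Toy values (all made up): 2-dimensional r_ctx and r_prs, 3 classes.
W_l = [[1.0, 0.0, 0.0, 0.0],
       [0.0, 1.0, 0.0, 0.0],
       [0.0, 0.0, 0.0, 2.0]]
b_l = [0.0, 0.0, 0.0]
y = classify([1.0, 0.0], [0.0, 1.0], W_l, b_l)
predicted = max(range(len(y)), key=lambda i: y[i])  # label with the largest probability
print(y, predicted)
```

In practice the parameter list passed to `regularized_loss` would hold every trainable weight; here a short list stands in for θ.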

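4 Experiments and Analysis

4.1 Datasets

The model is evaluated on the Restaurant and Laptop datasets of the SemEval2014[2] competition. The sentiment polarity of a target falls into three classes: positive, negative, and neutral. Dataset statistics are shown in Table 1.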

Table 1. Overall statistics of the datasets

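4.2 Hyperparameter Settings

In the experiments, words are initialized with 300-dimensional pre-trained GloVe vectors[12]. Every word missing from the embedding vocabulary is initialized as a random 300-dimensional vector drawn from the uniform distribution on [–0.25, 0.25]. All weight matrices are initialized from the uniform distribution on [–0.01, 0.01], and all biases are set to zero vectors.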
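The initialization scheme described above can be sketched with the standard library alone. This is only an illustration: the fixed seed, the function names, and the 3 x 4 matrix shape are assumptions made for the example, and loading the GloVe vectors themselves is left out.

```python
import random

EMBED_DIM = 300  # dimension of the GloVe vectors used in the paper


def init_oov_vector(rng, dim=EMBED_DIM):
    """Random vector for a word missing from the pre-trained vocabulary."""
    return [rng.uniform(-0.25, 0.25) for _ in range(dim)]


def init_weight_matrix(rng, rows, cols):
    """Weight matrix with entries drawn uniformly from [-0.01, 0.01]."""
    return [[rng.uniform(-0.01, 0.01) for _ in range(cols)] for _ in range(rows)]


def init_bias(size):
    """Bias vector initialized to zeros."""
    return [0.0] * size


rng = random.Random(0)  # fixed seed so the sketch is repeatable (an assumption)
oov = init_oov_vector(rng)
W = init_weight_matrix(rng, rows=3, cols=4)
b = init_bias(3)
print(len(oov), len(W), len(W[0]), b)
```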

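The model is implemented in PyTorch, and parameters are updated by stochastic gradient descent[16] during training. The hyperparameter values used in the experiments are listed in Table 2.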

Table 2. Hyperparameter settings

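4.3 Results and Discussion

The results of the baseline models and of the proposed model are shown in Table 3. As Table 3 shows, the proposed PEMAN model improves on each of the baseline models to varying degrees on the TSSA task.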

Table 3. Sentiment classification accuracy of different models

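The results show that PEMAN reaches accuracies of 80.6% on the Restaurant dataset and 74.9% on the Laptop dataset, a clear improvement over the baselines. Using the sentence, phrase, and target representations, PEMAN builds two attention networks that fuse sentence-level context with phrase-level features. This alleviates the scattered-attention problem to some extent and gives the model greater expressive power than the baselines. When the sentiment expression consists of several words, PEMAN, with its phrase features, captures the semantics of the phrase more accurately, understands the sentence better, and avoids ambiguity.

In addition, comparative experiments with phrase lengths from 2 to 5 were run to examine how the phrase length affects the expressive power of PEMAN. The results are shown in Table 4.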

Table 4. Effect of the phrase length on the performance of the proposed model

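As Table 4 shows, with a phrase length of 3, PEMAN achieves its best accuracies on the two datasets, 80.6% and 74.9%. With a phrase length of 2, its accuracy is still higher than that of most baselines, which indicates that the phrase-level features strengthen the model's expressive power. With a phrase length of 4 or 5, however, accuracy drops noticeably, suggesting that overly long phrases may make the semantic features too abstract and thus reduce accuracy.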

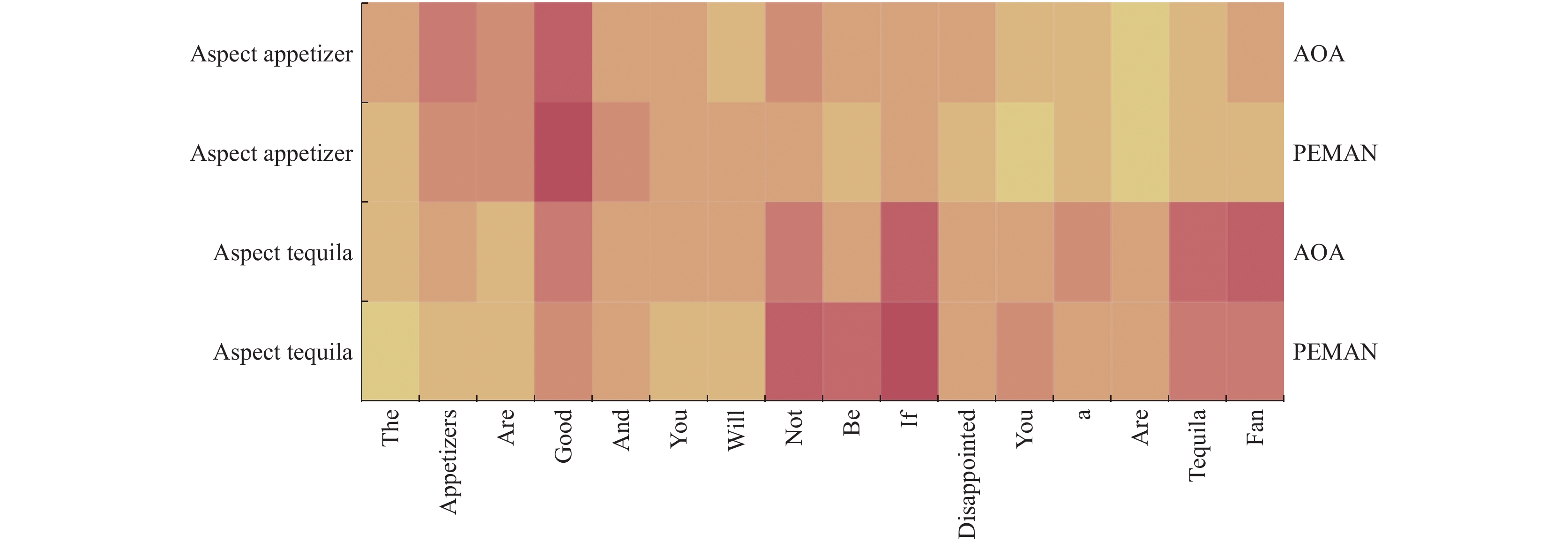

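4.4 Sample Analysis

This section uses a sample to examine where PEMAN improves and why its accuracy is higher. The example sentence "The appetizers are good and you will not be disappointed if you are a Tequila fan." contains two restaurant-related targets, "appetizers" and "Tequila". Table 5 shows the sentiment predicted for these two targets by the AOA[10] model and by PEMAN.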

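Table 5. Comparison of the two models' results on this example

Figure 2 shows how the two models distribute attention weights over the context for each target. The color of each cell indicates the weight the model assigns to the corresponding word in the sentence; the darker the color, the larger the weight.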

Figure 2. Attention weight distributions over the sentence in the AOA[10] and PEMAN models

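For the target "Tequila", the sentiment is expressed by the phrase "not be disappointed", which is positive. The AOA[10] model assigns large weights both to the negative sentiment word "disappointed" and to the positive sentiment word "fan", but it does not give enough weight to the phrase "not be disappointed" as a whole, so it predicts negative sentiment for "Tequila", which is wrong. PEMAN, by introducing phrase-level semantic features, captures the positive sentiment expressed by "not be disappointed" more accurately and therefore classifies the target correctly. For the target "appetizers", PEMAN also assigns a higher weight to the corresponding sentiment word "good", indicating that fusing phrase-level features captures more of the sentence's semantics and strengthens the model's expressive power.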

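5 Conclusion and Future Work

TSSA is a fine-grained sentiment analysis task that aims to determine the sentiment polarity of specific targets in a sentence. This paper proposed PEMAN, a multi-attention network that fuses phrase features. By introducing phrase-level features and building a multi-attention mechanism that fuses features at multiple granularities, PEMAN achieves greater expressive power. Experiments show that PEMAN improves accuracy on the TSSA task.

Although this work improves on a number of baseline models, several problems remain open: (1) In TSSA, current methods can process only one target at a time during training; future work will consider how to process multiple targets simultaneously. (2) For idioms and colloquial expressions that may appear in the data, we will explore how to incorporate prior linguistic knowledge into neural network models to further improve their understanding.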

[1] Hochreiter S, Schmidhuber J. LSTM can solve hard long time lag problems. Proceedings of the 9th International Conference on Neural Information Processing Systems. Cambridge, MA, USA. 1996. 473–479.

[2] Pontiki M, Galanis D, Pavlopoulos J, et al. SemEval-2014 task 4: Aspect based sentiment analysis. Proceedings of the 8th International Workshop on Semantic Evaluation. Dublin, Ireland. 2014. 27–35.

[3] Vo DT, Zhang Y. Target-dependent twitter sentiment classification with rich automatic features. Proceedings of the 24th International Conference on Artificial Intelligence. Buenos Aires, Argentina. 2015. 1347–1353.

[4] Kiritchenko S, Zhu XD, Cherry C, et al. NRC-Canada-2014: Detecting aspects and sentiment in customer reviews. Proceedings of the 8th International Workshop on Semantic Evaluation. Dublin, Ireland. 2014. 437–442.

[5] Tang DY, Qin B, Feng XC, et al. Effective LSTMs for target-dependent sentiment classification. arXiv: 1512.01100, 2015.

[6] Wang YQ, Huang ML, Zhao L, et al. Attention-based LSTM for aspect-level sentiment classification. Proceedings of 2016 Conference on Empirical Methods in Natural Language Processing. Austin, TX, USA. 2016. 606–615.

[7] Tang DY, Qin B, Liu T. Aspect level sentiment classification with deep memory network. arXiv: 1605.08900, 2016.

[8] Ma DH, Li SJ, Zhang XD, et al. Interactive attention networks for aspect-level sentiment classification. arXiv: 1709.00893, 2017.

[9] Chen P, Sun ZQ, Bing LD, et al. Recurrent attention network on memory for aspect sentiment analysis. Proceedings of 2017 Conference on Empirical Methods in Natural Language Processing. Copenhagen, Denmark. 2017. 452–461.

[10] Huang BX, Ou YL, Carley KM. Aspect level sentiment classification with attention-over-attention neural networks. Proceedings of the 11th International Conference on Social Computing, Behavioral-Cultural Modeling and Prediction and Behavior Representation in Modeling and Simulation. Washington, DC, USA. 2018. 197–206.

[11] Graves A, Schmidhuber J. Framewise phoneme classification with bidirectional LSTM and other neural network architectures. Neural Networks, 2005, 18(5–6): 602–610.

[12] Pennington J, Socher R, Manning C. Glove: Global vectors for word representation. Proceedings of 2014 Conference on Empirical Methods in Natural Language Processing. Doha, Qatar. 2014. 1532–1543.

[13] Deng LY. The cross-entropy method: A unified approach to combinatorial optimization, Monte-Carlo simulation, and machine learning. Technometrics, 2006, 48(1): 147–148. DOI:10.1198/tech.2006.s353

[14] Ioffe S, Szegedy C. Batch normalization: Accelerating deep network training by reducing internal covariate shift. Proceedings of the 32nd International Conference on Machine Learning. Lille, France. 2015. 448–456.

[15] Srivastava N, Hinton G, Krizhevsky A, et al. Dropout: A simple way to prevent neural networks from overfitting. The Journal of Machine Learning Research, 2014, 15(56): 1929–1958.

[16] Bottou L. Large-scale machine learning with stochastic gradient descent. Proceedings of the 19th International Conference on Computational Statistics. Paris, France. 2010. 177–186.

2020, Vol. 29