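Image captioning is a fundamental task at the intersection of computer vision and natural language processing: given an image, the model generates a corresponding natural-language description. It has a wide range of applications, such as assisting visually impaired people, human-computer interaction, and visual assistants. However, describing image content in natural, fluent sentences is challenging for machines. It requires the captioning model not only to recognize the salient objects in an image, but also to identify the relationships among these objects and to express this semantic information in natural language. With the rise of deep learning, deep-learning-based image captioning models have developed steadily. However, most current methods use only a single attention mechanism, and image features contain redundant and irrelevant information that can mislead the attention computation and cause the decoder to generate incorrect sentences.

To address these problems, this paper proposes a new image captioning model based on a dual refined attention mechanism. The model first uses the Faster R-CNN[1] object detection algorithm to extract image region features. It then uses a spatial attention mechanism to attend to regions containing salient objects, and a channel attention mechanism to attend to salient hidden units, which carry semantic information more relevant to the word being predicted. When computing the attention weights, a convolution is first applied to the decoder's hidden state to filter out irrelevant information. The attended features are then fed into a feature refinement module that filters out redundant information, and the refined features are incorporated into the model. As a result, these features are semantically more relevant to the image content.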

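2 Related work

In recent years, deep learning has made major progress, and researchers have proposed a variety of deep-learning-based image captioning models. Vinyals et al.[2] proposed an encoder-decoder image captioning model that borrows the encoder-decoder architecture commonly used in machine translation. Unlike machine translation, the model uses a convolutional neural network (the Inception network[3]) as the encoder to extract image features and a long short-term memory network (LSTM)[4] as the decoder to generate sentences. However, the model uses the image features only at the first step and not in subsequent generation steps. Wu et al.[5] used a fine-tuned multi-label classifier to extract attribute information from the image and used it as guidance for caption generation, which improved performance. Yao et al.[6] used a convolutional neural network pretrained with multiple-instance learning to extract attribute information, used a convolutional neural network to extract image features, and designed five architectures to find the best way to exploit these two representations and to explore the intrinsic relationship between them.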

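Reinforcement learning methods have also been introduced into image captioning. Ranzato et al.[7] proposed a method that directly optimizes the evaluation metric, using policy gradients to handle the fact that the metric is non-differentiable and therefore hard to optimize by backpropagation. By using Monte Carlo sampling to estimate the expected future reward, the method makes training more efficient and stable. Rennie et al.[8] proposed the self-critical sequence training (SCST) method, which is based on the policy gradient algorithm and uses the caption decoded by the model itself as the baseline, improving training stability. SCST significantly improves captioning performance and, to some extent, alleviates the mismatch between the training and test phases.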

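Inspired by the attention mechanism of the human visual system, Xu et al.[9] were the first to introduce attention into image captioning models. At each decoding time step, the model computes weights for the features at different image locations based on the decoder's hidden state. These weights measure the relevance between image regions and the next word to be generated. You et al.[10] proposed a semantic attention mechanism that first extracts attribute information from the image and, at each generation step, selects the most important attributes to provide auxiliary information to the model. Lu et al.[11] proposed an adaptive attention mechanism based on the concept of a sentinel position: when the model generates a word unrelated to the image content, attention is placed on the sentinel position, which improves caption accuracy. Chen et al.[12] proposed an image captioning model that combines spatial attention and channel attention. In contrast, this paper uses refined spatial and channel attention and extracts spatial region features with Faster R-CNN, yielding finer-grained features.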

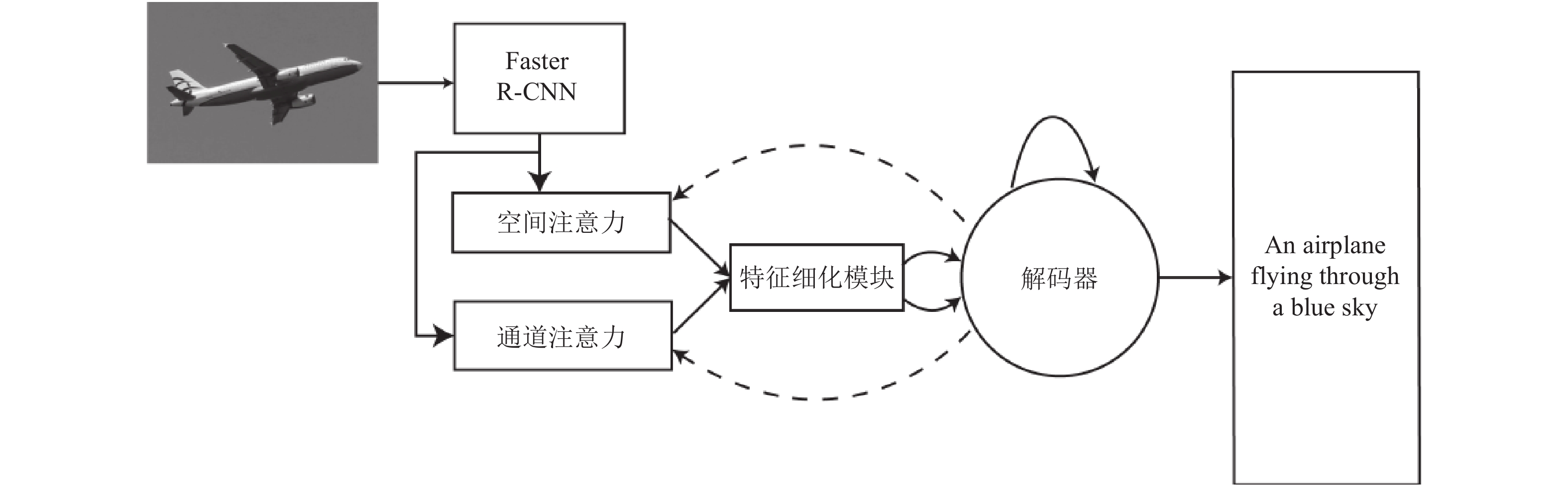

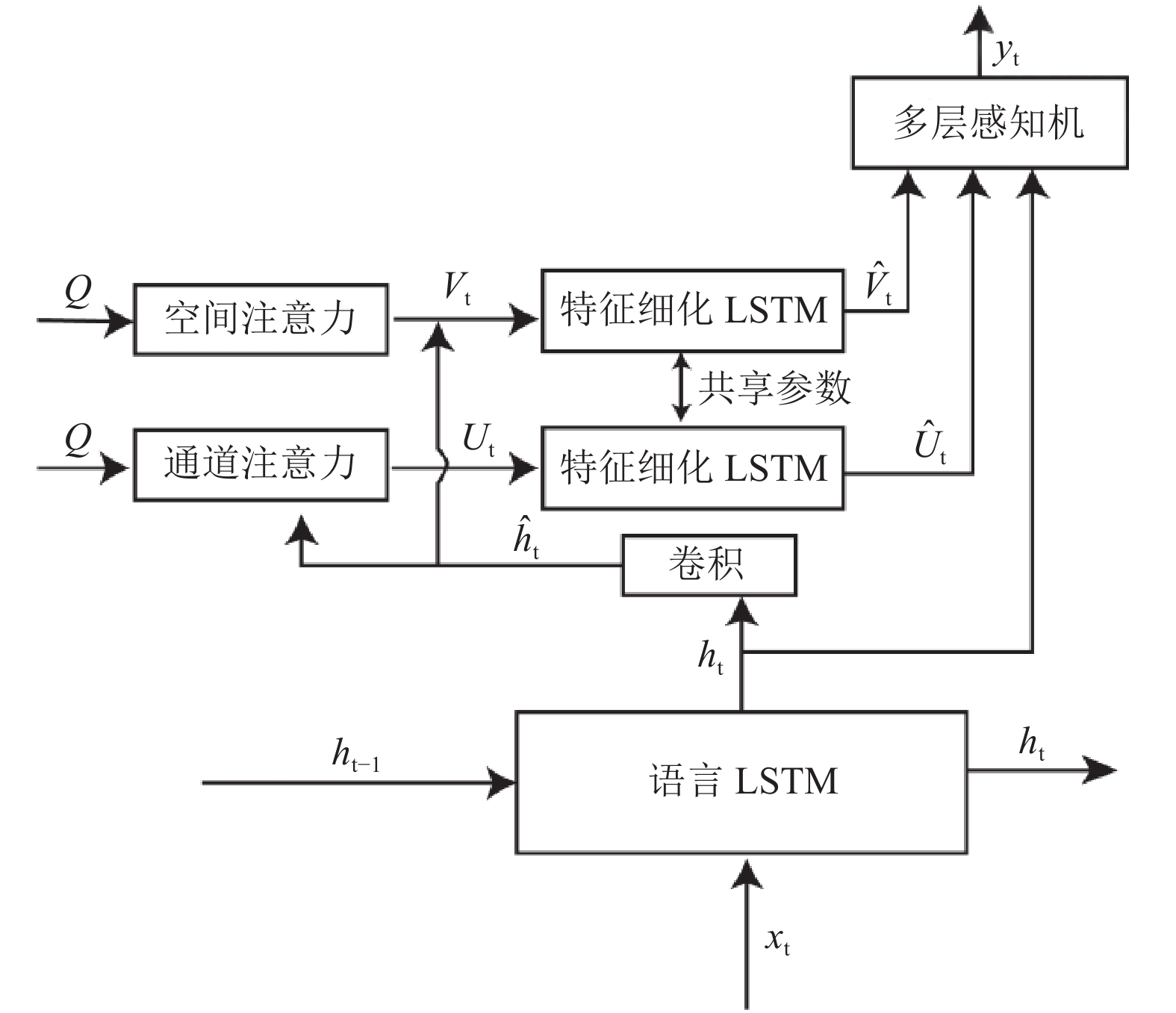

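3 Model

As shown in Fig. 1, the proposed model contains five basic components: an encoder, a spatial attention mechanism, a channel attention mechanism, a feature refinement module, and a decoder. The overall pipeline is shown in Fig. 2. First, the encoder uses the Faster R-CNN object detection algorithm to extract image region features. Then, at each time step, the spatial and channel attention mechanisms compute their respective feature weights, and the feature refinement module refines the weighted spatial and channel image features by filtering out redundant and irrelevant ones. Guided by the refined image features, the decoder generates one word at each time step.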

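3.1 Encoder

This paper uses the Faster R-CNN object detection algorithm to extract image region features. Faster R-CNN introduces the Region Proposal Network (RPN), which improves detection accuracy. The image is first fed into a convolutional neural network, and the high-level convolutional features are fed into the RPN to obtain region proposals. Region-of-interest pooling is then applied to the proposals together with the high-level convolutional features to obtain feature maps of the same size (14×14). These feature maps are fed into another convolutional neural network, and the resulting features are average-pooled to give the corresponding region features. Finally, non-maximum suppression is used to filter out regions with low confidence. This yields features for $L$ different regions, which are collected into the set $A$, as shown in Eq. (1). Each region feature has $D$ channels.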

| ${{A}} = \{ {{{a}}_1}, \cdots ,{{{a}}_L}\} ,\;\;{{{a}}_i} \in {{\mathbb R}^D}$ | (1) |

The global image feature can be approximated by the average of the local features, as shown in Eq. (2).

| ${{{a}}^g} = \frac{1}{L}\sum\limits_{i = 1}^L {{{{a}}_i}} $ | (2) |

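The local and global image features are then each fed into a single-layer perceptron with a ReLU activation function, which projects them into a $d$-dimensional space.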

| ${{{q}}_i} = {{ReLU}}({{{W}}_a}{{{a}}_i})$ | (3) |

| ${{{q}}^g} = {{ReLU}}({{{W}}_b}{{{a}}^g})$ | (4) |

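where $W_a$ and $W_b$ are parameters to be learned. The projected local features are collected as $Q = [q_1, \cdots, q_L]$.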
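As a rough illustration of Eqs. (2)-(4), the following sketch averages a set of region features into a global feature and projects both through a ReLU layer. The sizes, the random stand-in features, and the helper names `mean_pool` and `relu_project` are assumptions made for this example only; real region features would come from the Faster R-CNN encoder, and the weights would be learned.

```python
import random

def mean_pool(regions):
    """Eq. (2): average L region vectors (each of length D) into one vector."""
    count = len(regions)
    return [sum(column) / count for column in zip(*regions)]

def relu_project(W, x):
    """Eqs. (3)-(4): ReLU(W x) for a d x D matrix W and a length-D vector x."""
    return [max(0.0, sum(w * v for w, v in zip(row, x))) for row in W]

random.seed(0)
L, D, d = 4, 6, 3  # toy sizes, chosen only for illustration

# Stand-in region features and untrained random weights (assumptions).
A = [[random.gauss(0, 1) for _ in range(D)] for _ in range(L)]
W_a = [[random.gauss(0, 0.5) for _ in range(D)] for _ in range(d)]
W_b = [[random.gauss(0, 0.5) for _ in range(D)] for _ in range(d)]

a_g = mean_pool(A)                          # Eq. (2): global feature
Q = [relu_project(W_a, a_i) for a_i in A]   # Eq. (3): one q_i per region
q_g = relu_project(W_b, a_g)                # Eq. (4): projected global feature
```

Here `Q` is stored as a list of $L$ vectors of length $d$, which corresponds to the columns of the matrix $Q$ used in the attention equations.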

Fig. 1 Overall framework

Fig. 2 Decoder structure

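3.2 Spatial attention model

Spatial attention is widely used in image captioning. Traditional models that follow the encoder-decoder structure use only the global image feature. Models based on spatial attention focus more on the salient regions of the image and can capture more of their details. When generating a word related to an object in the image, the spatial attention model can increase the weight of the corresponding image region. The proposed model also adopts spatial attention.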

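As shown in Fig. 2, given the local region features $Q$ and the decoder's current hidden state $h_t$, the spatial attention weights are computed as follows: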

| ${ {\widehat{h}}_t} = {{Conv}}({{{h}}_t})\,$ | (5) |

| ${{z}}_t^s\; = {{w}}_{hs}^{\rm T}\tanh ({{{W}}_{qs}}{{Q}} + ({{{W}}_{ss}}{{\widehat {h}}_t}){{{1}}^{\rm T}})$ | (6) |

| ${{{\alpha }}_t} = {{Softmax}}({{z}}_t^s)$ | (7) |

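where Conv is a block consisting of one convolutional layer followed by a ReLU activation function. The attended local image feature $V_t$ is the weighted sum of the local features: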

| ${{{V}}_t} = \sum\limits_{i = 1}^L {{\alpha _{ti}}{{{q}}_i}} $ | (8) |

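As in Ref. [11], this paper uses the decoder's hidden state at the current time step, rather than at the previous time step, to compute the spatial attention over the local image features.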
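The computation in Eqs. (6)-(8) can be sketched as follows. This is a minimal illustration, not the authors' implementation: the filtered hidden state $\widehat{h}_t$ of Eq. (5) is taken as a given input (the convolution block is not modeled), and the toy weights and the function name `spatial_attention` are assumptions.

```python
import math

def matvec(W, x):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]

def softmax(z):
    """Numerically stable softmax over a list of scores."""
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]

def spatial_attention(Q, h_hat, W_qs, W_ss, w_hs):
    """Eqs. (6)-(8). Q is a list of L region vectors q_i of length d."""
    s = matvec(W_ss, h_hat)  # same term for every region (the 1^T broadcast)
    z = []
    for q in Q:
        p = matvec(W_qs, q)
        z.append(sum(w * math.tanh(a + b) for w, a, b in zip(w_hs, p, s)))
    alpha = softmax(z)                                   # Eq. (7)
    V = [sum(alpha[i] * Q[i][j] for i in range(len(Q)))  # Eq. (8)
         for j in range(len(Q[0]))]
    return alpha, V

# Toy inputs (assumptions): 3 regions, 2 channels, identity weight matrices.
Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
h_hat = [0.5, -0.5]
I2 = [[1.0, 0.0], [0.0, 1.0]]
alpha, V_t = spatial_attention(Q, h_hat, I2, I2, [1.0, 1.0])
```

The weights `alpha` sum to one over the $L$ regions, so $V_t$ is a convex combination of the region features and has the same length $d$ as each $q_i$.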

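3.3 Channel attention model

Zhou et al.[13] found that each hidden unit can be aligned with a different semantic concept. However, in models based only on spatial attention, the channel features are treated identically, which ignores semantic differences. As shown in Fig. 2, this paper therefore also adopts a channel attention mechanism. The channel attention weights are computed from the transposed local region features $Q^{\rm T}$ and the filtered hidden state $\widehat{h}_t$: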

| ${{z}}_t^c = {{w}}_{hc}^{\rm T}({{{W}}_{qc}}{{{Q}}^{\rm T}} + ({{{W}}_{sc}}{{\widehat {h}}_t}){{{1}}^{\rm T}})$ | (9) |

| ${{{\beta }}_t} = {{Softmax}}({{z}}_t^c)$ | (10) |

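where $\beta_t$ is the vector of channel attention weights. The attended channel feature $U_t$ is computed as: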

| ${{{U}}_t} = \sum\limits_{i = 1}^d {{\beta _{ti}}{{Q}}_i^{\rm T}} $ | (11) |

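where $Q_i^{\rm T}$ denotes the feature of the $i$-th channel and $\beta_{ti}$ is its attention weight at time step $t$.

At each time step of caption generation, the attention weights are recomputed from the current hidden state, so the attended features change dynamically.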
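Eqs. (9)-(11) can be sketched in the same style as the spatial case. The sketch rests on one interpretive assumption that the text does not state explicitly: $Q_i^{\rm T}$ is read as the $i$-th channel's responses across the $L$ regions (a vector of length $L$), so $U_t$ also has length $L$. Following Eq. (9) as written, no tanh is applied. The toy weights and the name `channel_attention` are hypothetical.

```python
import math

def matvec(W, x):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]

def softmax(z):
    """Numerically stable softmax over a list of scores."""
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]

def channel_attention(Q, h_hat, W_qc, W_sc, w_hc):
    """Eqs. (9)-(11). Q is a list of L region vectors of length d."""
    # Assumption: channel i is the length-L vector of its values over regions.
    channels = [list(c) for c in zip(*Q)]
    s = matvec(W_sc, h_hat)  # same term for every channel (the 1^T broadcast)
    z = [sum(w * (a + b) for w, a, b in zip(w_hc, matvec(W_qc, c), s))
         for c in channels]                              # Eq. (9), no tanh
    beta = softmax(z)                                    # Eq. (10)
    U = [sum(beta[i] * channels[i][j] for i in range(len(channels)))
         for j in range(len(Q))]                         # Eq. (11)
    return beta, U

# Toy inputs (assumptions): 3 regions, 2 channels.
Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
h_hat = [0.5, -0.5]
W_qc = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]   # maps a length-L channel to length 2
W_sc = [[1.0, 0.0], [0.0, 1.0]]
beta, U_t = channel_attention(Q, h_hat, W_qc, W_sc, [1.0, -1.0])
```

The softmax here runs over the $d$ channels rather than over the $L$ regions, which is the only structural difference from the spatial attention sketch.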

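The extracted image features usually contain some features that are redundant or irrelevant to the caption being generated. To reduce their influence, this paper designs a feature refinement module that refines the image features by filtering out redundant and irrelevant ones. As shown in Fig. 2, the module is implemented as a single-layer LSTM, referred to as the feature refinement LSTM. After the attended local image feature $V_t$ and the attended channel feature $U_t$ are computed, they are first projected and then passed through the feature refinement LSTM: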

| ${{V}}_t^\prime = {{{W}}_{vd}}{{{V}}_t}$ | (12) |

| ${{U}}_t^\prime = {{{W}}_{ud}}{{{U}}_t}$ | (13) |

| ${{h}}_n^v = {f_{\rm LSTM}}({{V}}_t^\prime ,\;{{h}}_{n - 1}^v)$ | (14) |

| ${{h}}_n^u = {f_{\rm LSTM}}({{U}}_t^\prime ,\;{{h}}_{n - 1}^u)$ | (15) |

| ${{\widehat {V}}_t} = {{h}}_n^v$ | (16) |

| ${{\widehat {U}}_t} = {{h}}_n^u$ | (17) |

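where $W_{vd}$ and $W_{ud}$ are parameters to be learned and $f_{\rm LSTM}$ denotes the feature refinement LSTM.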

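LSTMs are commonly used in existing image captioning models because they are effective at modeling long-term dependencies. This paper follows the commonly used LSTM structure; the gates and memory cell of the basic LSTM block are defined as follows: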

| $\left\{\begin{array}{l} {{{x}}_t} = [{{{W}}_e}{y_{t - 1}};{q^g}],\;\;{\rm{ for }}\;t \ge 1 \\ {{{f}}_t} = \sigma ({{{W}}_{fx}}{{{x}}_t} + {{{W}}_{fh}}{{{h}}_{t - 1}} + {{{b}}_f}) \\ {{{i}}_t} = \sigma ({{{W}}_{ix}}{{{x}}_t} + {{{W}}_{ih}}{{{h}}_{t - 1}} + {{{b}}_i}) \\ {{{o}}_t} = \sigma ({{{W}}_{ox}}{{{x}}_t} + {{{W}}_{oh}}{{{h}}_{t - 1}} + {{{b}}_o}) \\ {{{c}}_t} = {{{f}}_t} \odot {{{c}}_{t - 1}} + {{{i}}_t} \odot \tanh ({{{W}}_{cx}}{{{x}}_t} + {{{W}}_{ch}}{{{h}}_{t - 1}} + {{{b}}_c}) \\ {{{h}}_t} = {{{o}}_t} \odot \tanh ({{{c}}_t}) \\ \end{array} \right.$ | (18) |

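where $f_t$, $i_t$, $o_t$, $c_t$, and $h_t$ denote the forget gate, input gate, output gate, memory cell, and hidden state, respectively; $\sigma$ is the sigmoid function; $\odot$ denotes element-wise multiplication; $y_{t-1}$ is the previously generated word; $W_e$ is the word embedding matrix; and the remaining $W$ and $b$ terms are parameters to be learned.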
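A single step of Eq. (18) can be written out directly. The sketch below is illustrative: the parameter layout (a dictionary keyed by gate name), the helper `zero_params`, and the toy inputs are assumptions. The input $x_t$ is formed by concatenating a word embedding with the projected global feature $q^g$, as in the first line of Eq. (18).

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def affine(Wx, Wh, b, x, h):
    """Compute Wx x + Wh h + b row by row."""
    return [sum(w * v for w, v in zip(rx, x)) +
            sum(w * v for w, v in zip(rh, h)) + bi
            for rx, rh, bi in zip(Wx, Wh, b)]

def lstm_step(params, x, h_prev, c_prev):
    """One step of Eq. (18); params maps 'f','i','o','c' to (Wx, Wh, b)."""
    f = [sigmoid(v) for v in affine(*params["f"], x, h_prev)]
    i = [sigmoid(v) for v in affine(*params["i"], x, h_prev)]
    o = [sigmoid(v) for v in affine(*params["o"], x, h_prev)]
    g = [math.tanh(v) for v in affine(*params["c"], x, h_prev)]
    c = [ft * cp + it * gt for ft, cp, it, gt in zip(f, c_prev, i, g)]
    h = [ot * math.tanh(ct) for ot, ct in zip(o, c)]
    return h, c

def zero_params(n_in, n_hid):
    """All-zero parameters (hypothetical helper, used only for the demo)."""
    def gate():
        return ([[0.0] * n_in for _ in range(n_hid)],
                [[0.0] * n_hid for _ in range(n_hid)],
                [0.0] * n_hid)
    return {name: gate() for name in "fioc"}

embedding = [0.1, -0.2]   # stand-in for W_e y_{t-1}
q_g = [0.3]               # stand-in for the projected global feature
x_t = embedding + q_g     # list concatenation, i.e. [W_e y_{t-1}; q^g]
h_t, c_t = lstm_step(zero_params(3, 2), x_t, [0.0, 0.0], [1.0, -2.0])
```

With all-zero parameters every gate equals 0.5 and the candidate term is 0, so the new cell state is simply half of the previous one; this makes the data flow easy to check by hand.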

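Using the hidden state $h_t$ together with the refined features $\widehat{U}_t$ and $\widehat{V}_t$, the probability distribution of the next word is computed as: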

| $p({y_t}|{y_1}, \cdots ,{y_{t - 1}},\;{{I}}) = {{Softmax}}({{{W}}_p}({{{h}}_t} + {{\widehat {U}}_t} + {{\widehat {V}}_t}))$ | (19) |
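Eq. (19) adds the hidden state and the two refined features element-wise before a linear layer and a softmax, which implies that all three vectors share the same dimension. A minimal sketch, with a hypothetical three-word vocabulary and hand-picked weights:

```python
import math

def matvec(W, x):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]

def softmax(z):
    """Numerically stable softmax over a list of scores."""
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]

def word_distribution(W_p, h, U_hat, V_hat):
    """Eq. (19): softmax(W_p (h + U_hat + V_hat))."""
    fused = [a + b + c for a, b, c in zip(h, U_hat, V_hat)]
    return softmax(matvec(W_p, fused))

# Toy example (assumed values): hidden size 2, vocabulary size 3.
W_p = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
p = word_distribution(W_p, [1.0, 0.0], [0.0, 0.0], [0.0, 1.0])
```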

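The first stage of training uses the cross-entropy loss as the objective function, as shown in Eq. (20); the second stage uses the SCST training method, with the objective function shown in Eq. (21).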

| ${{{L}}_{{{XE}}}}(\theta ) = - \sum\limits_{t = 1}^T {\log } ({p_\theta }(y_t^*|y_1^*, \cdots ,y_{t - 1}^*))$ | (20) |

| ${{{L}}_R} = - {{{E}}_{{y_{1:T}}\sim{p_\theta }}}\left[ {r({y_{1:T}})} \right]$ | (21) |

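where $\theta$ denotes the model parameters, $y_{1:T}^*$ is the reference caption, and $r(\cdot)$ is the reward of a caption $y_{1:T}$ sampled from the model.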

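During training, the word sequence of the reference caption is fed into the model to obtain the predicted word probability distribution at each time step; the objective function is then computed and optimized.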
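The two objectives can be sketched numerically. `xe_loss` evaluates Eq. (20) for one caption. `scst_loss` is the usual single-sample surrogate for Eq. (21) from the SCST method, in which the reward of the model's own greedily decoded caption serves as the baseline; the reward values below are made-up numbers, and which metric supplies the reward is left open here.

```python
import math

def xe_loss(step_probs, reference):
    """Eq. (20): negative log-likelihood of the reference word at each step.

    step_probs[t] is the predicted distribution at step t (list over vocab),
    reference[t] is the index of the reference word y*_t.
    """
    return -sum(math.log(p[y]) for p, y in zip(step_probs, reference))

def scst_loss(sample_logprobs, sample_reward, greedy_reward):
    """Single-sample surrogate for Eq. (21) with a self-critical baseline.

    Minimizing it raises the probability of sampled captions that score
    higher than the greedy caption and lowers it otherwise.
    """
    advantage = sample_reward - greedy_reward
    return -advantage * sum(sample_logprobs)

# Toy values (assumptions): two time steps, vocabulary of two words.
loss_xe = xe_loss([[0.5, 0.5], [0.25, 0.75]], [0, 1])
loss_rl = scst_loss([-1.0, -2.0], sample_reward=1.2, greedy_reward=1.0)
```

In an actual training loop the rewards are treated as constants and gradients flow only through the log-probabilities of the sampled words.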

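During inference, either the word with the highest probability is selected at each time step (greedy decoding), or beam search is used: at each step the $k$ most probable candidates are kept, and the caption with the highest joint probability is output as the final result.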
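The difference between greedy decoding and beam search can be seen on a toy model. In this sketch `step_fn` stands in for the decoder: it maps a prefix to a dictionary of next-token log-probabilities. The probability table, the function names, and the absence of length normalization are all assumptions of the example.

```python
import math

def beam_search(step_fn, bos, eos, k, max_len):
    """Keep the k best prefixes per step; return (best sequence, log-prob)."""
    beams = [([bos], 0.0)]
    finished = []
    for _ in range(max_len):
        candidates = []
        for seq, score in beams:
            for tok, logp in step_fn(seq).items():
                candidates.append((seq + [tok], score + logp))
        candidates.sort(key=lambda c: c[1], reverse=True)
        beams = []
        for seq, score in candidates[:k]:
            # Hypotheses that end with EOS leave the beam.
            (finished if seq[-1] == eos else beams).append((seq, score))
        if not beams:
            break
    finished.extend(beams)  # unfinished prefixes if max_len was reached
    return max(finished, key=lambda c: c[1])

# Hypothetical next-token probabilities, keyed by the last token.
TABLE = {
    "<BOS>": {"a": 0.6, "b": 0.4},
    "a": {"x": 0.55, "y": 0.45},
    "b": {"z": 0.9, "x": 0.1},
}

def toy_step(prefix):
    probs = TABLE.get(prefix[-1], {"<EOS>": 1.0})
    return {tok: math.log(p) for tok, p in probs.items()}

greedy_seq, greedy_lp = beam_search(toy_step, "<BOS>", "<EOS>", k=1, max_len=5)
beam_seq, beam_lp = beam_search(toy_step, "<BOS>", "<EOS>", k=2, max_len=5)
```

With `k=1` the search commits to "a" at the first step and ends with joint probability 0.33, while `k=2` keeps "b" alive and finds the caption with joint probability 0.36.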

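4 Experiments

4.1 Dataset and evaluation metrics

The proposed model is evaluated on the MS COCO dataset[14] for image captioning. The COCO dataset contains 82,783 training images, 40,504 validation images, and 40,775 test images. It also provides an evaluation server for online testing. This paper uses the data split from Ref. [15], which contains 5,000 images for validation and 5,000 images for testing, with the remaining images used for training.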

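To assess the quality of the generated captions and compare with other methods, this paper uses widely adopted evaluation metrics, including BLEU[16], METEOR[17], ROUGE-L[18], and CIDEr[19], computed with the evaluation tool provided in Ref. [20]. BLEU measures the n-gram precision between the generated and reference sentences. ROUGE-L measures the F-score of the longest common subsequence (LCS) between the generated and reference sentences. METEOR establishes a correspondence between the words of the generated and reference sentences and correlates better with human judgment. Unlike these metrics, CIDEr was designed specifically for image captioning; it measures the consensus between the generated caption and the reference captions using TF-IDF weights computed for each n-gram.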
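To make two of these definitions concrete, the sketch below computes a clipped n-gram precision (the core quantity inside BLEU) and an LCS-based F-score in the style of ROUGE-L for a single candidate and a single reference. It is a simplification: full BLEU also combines several n-gram orders and applies a brevity penalty, official tools handle multiple references, and the value `beta=1.0` is an assumed default rather than a setting taken from this paper.

```python
from collections import Counter

def ngram_precision(candidate, reference, n):
    """Clipped n-gram precision of a candidate against one reference."""
    cand = Counter(tuple(candidate[i:i + n])
                   for i in range(len(candidate) - n + 1))
    ref = Counter(tuple(reference[i:i + n])
                  for i in range(len(reference) - n + 1))
    total = sum(cand.values())
    if total == 0:
        return 0.0
    matched = sum(min(count, ref[gram]) for gram, count in cand.items())
    return matched / total

def lcs_length(a, b):
    """Length of the longest common subsequence, by dynamic programming."""
    dp = [[0] * (len(b) + 1) for _ in range(len(a) + 1)]
    for i, x in enumerate(a, 1):
        for j, y in enumerate(b, 1):
            dp[i][j] = dp[i - 1][j - 1] + 1 if x == y else max(dp[i - 1][j], dp[i][j - 1])
    return dp[len(a)][len(b)]

def rouge_l_f(candidate, reference, beta=1.0):
    """F-score of LCS precision and recall (beta weights recall)."""
    lcs = lcs_length(candidate, reference)
    if lcs == 0:
        return 0.0
    p = lcs / len(candidate)
    r = lcs / len(reference)
    return (1 + beta ** 2) * p * r / (r + beta ** 2 * p)

cand = "a dog runs on the grass".split()
ref = "a dog is running on the grass".split()
p1 = ngram_precision(cand, ref, 1)   # 5 of 6 unigrams match
p2 = ngram_precision(cand, ref, 2)   # 3 of 5 bigrams match
f_l = rouge_l_f(cand, ref)           # LCS is "a dog on the grass"
```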

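4.2 Implementation details

All captions in the COCO dataset are first converted to lowercase, and the maximum caption length is set to 15; words beyond the 15th are truncated. All words that occur fewer than 5 times in the training set are filtered out, and four special tokens are added: "<BOS>" marks the beginning of a sentence, "<EOS>" marks the end of a sentence, "<UNK>" represents an unknown word, and "<PAD>" is the padding token. After this processing, the vocabulary contains 10,372 words.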
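The preprocessing steps above can be sketched as follows. Whitespace tokenization, the ordering of the special tokens, and the function names `build_vocab` and `encode` are assumptions of this example; the thresholds (minimum count 5, maximum length 15) follow the text.

```python
from collections import Counter

SPECIALS = ["<PAD>", "<BOS>", "<EOS>", "<UNK>"]

def build_vocab(captions, min_count=5):
    """Map each word seen at least min_count times to an integer id."""
    counts = Counter(w for c in captions for w in c.lower().split())
    words = sorted(w for w, n in counts.items() if n >= min_count)
    return {w: i for i, w in enumerate(SPECIALS + words)}

def encode(caption, vocab, max_len=15):
    """Lowercase, truncate to max_len words, add BOS/EOS, pad to fixed size."""
    words = caption.lower().split()[:max_len]
    ids = [vocab["<BOS>"]]
    ids += [vocab.get(w, vocab["<UNK>"]) for w in words]
    ids.append(vocab["<EOS>"])
    ids += [vocab["<PAD>"]] * (max_len + 2 - len(ids))
    return ids

# Tiny made-up corpus: "cat" and "sleeps" occur only once and are dropped.
captions = ["A dog runs"] * 5 + ["A cat sleeps"]
vocab = build_vocab(captions)
ids = encode("A cat runs", vocab)
```

Every encoded caption has length `max_len + 2`, which makes it straightforward to batch captions of different lengths.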

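The number of LSTM hidden units is set to 512. Word embeddings are randomly initialized rather than taken from pretrained embeddings. We train the model with the Adam optimizer[21]. In the cross-entropy training stage, the base learning rate is set to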

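Google NIC[2] uses the encoder-decoder framework, with a convolutional neural network as the encoder and an LSTM as the decoder.

Hard-Attention[9] introduces spatial attention into the image captioning model and dynamically assigns weights to the features of different image regions according to the decoder state.

MSM[6] jointly exploits image attribute information and global image features.

AdaAtt[11] uses an adaptive attention mechanism: if the word to be generated is unrelated to the image content, attention is placed on a virtual "sentinel" position.

The model in Ref. [22] uses visual attribute attention and introduces residual connections.

Att2all[8] was the first to propose and use the SCST training method.

SCA-CNN[12] uses both spatial and channel attention.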

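4.4 Experimental analysis

As shown in Table 1, compared with the SCA-CNN model, the dual refined attention and the spatial region features used in the proposed model provide stronger guidance for caption generation. Compared with the Hard-Attention model, the AdaAtt model, the model in Ref. [22], and the Att2all model, which use only a single spatial attention mechanism, the dual refined attention mechanism in the proposed model produces more compact features with less redundant information. In addition to applying attention over spatial positions, it also applies attention over channels, allowing the model to make better use of the features relevant to the caption being generated.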

| Table 1 Comparison of the proposed model with classical algorithms |

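To study the effectiveness of the individual modules, several model variants were designed for comparison; the results are given in Table 2. The baseline model uses only the Faster R-CNN object detection algorithm to extract image region features, without attention mechanisms or the feature refinement module. An "X" in the table indicates that the variant adds the corresponding module to the baseline. Table 2 shows that the spatial attention mechanism, the channel attention mechanism, and the feature refinement module each improve performance. Model 3, which uses both attention mechanisms, improves further over Model 2 and Model 1, which each use only one, demonstrating the effectiveness of the proposed dual attention mechanism. Model 5, Model 6, and the proposed algorithm add the feature refinement module to Model 1, Model 2, and Model 3, respectively, and their performance also improves, demonstrating the effectiveness of the feature refinement module.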

| Table 2 Comparison of the effects of different modules of the proposed model |

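5 Conclusion and outlook

This paper proposes a new image captioning model based on a dual refined attention mechanism. The model integrates spatial attention and channel attention. A convolution is first applied to filter irrelevant information out of the hidden state, and attention is then computed. To reduce the influence of redundant and irrelevant features in the attended image features, this paper designs a feature refinement module that refines the attended features, making them more compact and discriminative. To verify the effectiveness of the proposed model, we conducted experiments on the MS COCO dataset; the results show that the proposed model achieves superior performance.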

[1] Ren SQ, He KM, Girshick R, et al. Faster R-CNN: Towards real-time object detection with region proposal networks. Advances in Neural Information Processing Systems. 2015. 91–99.

[2] Vinyals O, Toshev A, Bengio S, et al. Show and tell: A neural image caption generator. Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition. Boston, MA, USA. 2015. 3156–3164.

[3] Ioffe S, Szegedy C. Batch normalization: Accelerating deep network training by reducing internal covariate shift. arXiv preprint arXiv: 1502.03167, 2015.

[4] Hochreiter S, Schmidhuber J. Long short-term memory. Neural Computation, 1997, 9(8): 1735–1780. DOI:10.1162/neco.1997.9.8.1735

[5] Wu Q, Shen CH, Liu LQ, et al. What value do explicit high level concepts have in vision to language problems? Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition. Las Vegas, NV, USA. 2016. 203–212.

[6] Yao T, Pan YW, Li YH, et al. Boosting image captioning with attributes. Proceedings of the 2017 IEEE International Conference on Computer Vision. Venice, Italy. 2017. 4894–4902.

[7] Ranzato MA, Chopra S, Auli M, et al. Sequence level training with recurrent neural networks. arXiv preprint arXiv: 1511.06732, 2015.

[8] Rennie SJ, Marcheret E, Mroueh Y, et al. Self-critical sequence training for image captioning. Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition. Honolulu, HI, USA. 2017. 7008–7024.

[9] Xu K, Ba J, Kiros R, et al. Show, attend and tell: Neural image caption generation with visual attention. arXiv preprint arXiv: 1502.03044, 2015. 2048–2057.

[10] You QZ, Jin HL, Wang ZW, et al. Image captioning with semantic attention. Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition. Las Vegas, NV, USA. 2016. 4651–4659.

[11] Lu JS, Xiong CM, Parikh D, et al. Knowing when to look: Adaptive attention via a visual sentinel for image captioning. Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition. Honolulu, HI, USA. 2017. 375–383.

[12] Chen L, Zhang HW, Xiao J, et al. SCA-CNN: Spatial and channel-wise attention in convolutional networks for image captioning. Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition. Honolulu, HI, USA. 2017. 5659–5667.

[13] Zhou BL, Bau D, Oliva A, et al. Interpreting deep visual representations via network dissection. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2019, 41(9): 2131–2145. DOI:10.1109/TPAMI.2018.2858759

[14] Lin TY, Maire M, Belongie S, et al. Microsoft COCO: Common objects in context. Proceedings of European Conference on Computer Vision (ECCV 2014). Zurich, Switzerland. 2014. 740–755.

[15] Karpathy A, Li FF. Deep visual-semantic alignments for generating image descriptions. Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition. Boston, MA, USA. 2015. 3128–3137.

[16] Papineni K, Roukos S, Ward T, et al. BLEU: A method for automatic evaluation of machine translation. Proceedings of the 40th Annual Meeting on Association for Computational Linguistics. Philadelphia, PA, USA. 2002. 311–318.

[17] Banerjee S, Lavie A. METEOR: An automatic metric for MT evaluation with improved correlation with human judgments. Proceedings of the ACL Workshop on Intrinsic and Extrinsic Evaluation Measures for Machine Translation and/or Summarization. Ann Arbor, MI, USA. 2005. 65–72.

[18] Lin CY. ROUGE: A package for automatic evaluation of summaries. Proceedings of the Workshop on Text Summarization Branches Out. Barcelona, Spain. 2004. 74–81.

[19] Vedantam R, Zitnick CL, Parikh D. CIDEr: Consensus-based image description evaluation. Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition. Boston, MA, USA. 2015. 4566–4575.

[20] Chen XL, Fang H, Lin TY, et al. Microsoft COCO captions: Data collection and evaluation server. arXiv preprint arXiv: 1504.00325, 2015.

[21] Kingma DP, Ba J. Adam: A method for stochastic optimization. arXiv preprint arXiv: 1412.6980, 2015.

[22] Zhou ZP, Zhang W. Image description generation model combining visual attribute attention and residual connections. Journal of Computer-Aided Design & Computer Graphics, 2018, 30(8): 1536–1542, 1553. (in Chinese)

2020, Vol. 29
