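Ensuring the personal safety of track maintenance workers is an important part of railway transport management [1]. Existing manual monitoring is costly, covers only a small area, and is easily affected by environmental conditions. Casualties caused by human error still occur from time to time:

(1) At 14:29 on 22 May 2010, the Xichang Track Maintenance Section was carrying out minor repair work between Jiuli and Yangang stations on the Chengdu-Kunming line. Two contract laborers failed to clear the track in time and were struck and killed by passing freight train No. 44116.

(2) At 4:05 on 13 May 2014, the Hangzhou Track Maintenance Section of the Shanghai Railway Bureau was performing rail flaw detection between Quzhou and Houxijie stations on the down line of the Shanghai-Kunming line. Three workers were hit by a catenary maintenance vehicle running in the opposite direction, killing two and seriously injuring one [2]. The vehicle belonged to the Hangzhou Maintenance Section of the Operation Management Company of China Railway Electrification Bureau.

With the continued development of microelectronics, networking, and sensor technology, human activity can now be captured continuously and in real time with little effort. Analyzing these activity data helps reveal human behavior patterns and has practical value for safety monitoring in railway track maintenance.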

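Early human activity recognition relied mainly on rule-based logic. Such methods cannot cope with noise in the data, make model construction difficult, and have limited recognition ability. Most current activity recognition research therefore uses machine learning [3].

Machine learning means having a computer learn the regularities inherent in data in order to acquire new experience and knowledge. This makes the computer more intelligent and lets it make decisions as a human would. Activity recognition is usually treated as a classification problem in machine learning. Commonly used classification algorithms in recent years include decision trees, random forests, K-nearest neighbors (KNN), naïve Bayes, support vector machines (SVM), logistic regression, and neural networks.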

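Many activity recognition methods exist for different application domains. Classified by how activity is monitored, they fall into two groups: vision-based and sensor-based activity recognition.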

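Vision-based activity recognition is further divided into recognition in the visible-light band and in the infrared band. Because visible-light data are easy to obtain, research in this band started earlier.

- Zhang et al. [4] used optical flow to represent motion and locate regions of interest. They described each region with histograms of oriented gradients and of optical flow, and classified the action types with an SVM classifier.
- Zhang et al. [5] used affine-SIFT (ASIFT), an extension of SIFT features, to represent keypoint trajectories and obtain global spatiotemporal information about human actions. They described the features with LDA and finally classified them with K-means.
- Sowmya [6] used the bag-of-features (BOF) algorithm to recognize construction workers climbing ladders, laying bricks, doing carpentry, painting, and plastering, with an accuracy of 95%. The same algorithm reached 96% on the KTH dataset.

Visible-light systems are sensitive to changes in illumination and perform poorly in low light. They also suffer from occlusion and possible privacy leakage. As a result, infrared-band recognition has received increasing attention in recent years. Jiang et al. [7] were the first to apply 3D convolutional neural networks to infrared activity recognition. Liu et al. [8] proposed a global spatiotemporal representation based on accumulated optical-flow images and achieved 79.25% accuracy on the InfAR dataset.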

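Sensor-based methods are not limited by scene or lighting and are flexible to use, so they are the most widely applied in activity recognition.

- Huang et al. [9] classified five everyday activities with a decision tree, a random forest, and an SVM. They also studied how different wearing positions around the waist affect the recognition rate.
- Ravì et al. [10] proposed a deep learning approach that combines features learned from inertial sensor data with complementary information from a set of shallow features. It achieved accurate, real-time activity classification.
- Cardoso et al. [11] addressed the problem that recognition rates are high on static datasets but low on newly arriving data. They proposed online semi-supervised learning, in which labeled and unlabeled data continuously train the model, and this improved recognition of new users' activities.
- Khan et al. [12] studied worker activity recognition with a single triaxial accelerometer and achieved 94% accuracy on their own collected dataset. They also showed that wearing the sensor at the waist gives the best results.
- Ward et al. [13] simulated a wood-workshop assembly task, with sensors and microphones mounted at two positions on the arm. They studied nine activities: sawing, hammering, filing, drilling, sanding, grinding, opening a drawer, tightening a vise, and turning a screwdriver. They used hidden Markov models (HMMs) for the acceleration data and linear discriminant analysis (LDA) for the sound. The resulting accuracies were 98% user-dependent, 87% user-independent, and 95% user-adapted.
- Mekruksavanich et al. [14] studied sedentary behavior in the office using the accelerometer and gyroscope of a smartwatch. With the classification algorithms in WEKA, they obtained a best recognition rate of 93.57%.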

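In summary, vision-based recognition places high demands on lighting and viewing angle and is neither flexible nor portable, so it is unsuitable for railway track maintenance. This paper therefore adopts an accelerometer-based approach. It analyzes and simulates seven common activities of railway track maintenance workers and extracts their time- and frequency-domain features. Classification and testing are then performed on the WEKA platform. The overall research approach is shown in Figure 1.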

| Figure 1 Flowchart of the research approach |

1 Activity recognition for railway track maintenance workers

1.1 Characteristics of track maintenance workers' activities

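This paper takes rail flaw-detection workers as representative and studies their activities. Media footage [15] shows that these workers move at a relatively low frequency and perform a fairly fixed set of actions. The actions fall into seven categories: stepping onto the track, stepping off the track, walking, running, squatting, standing up, and standing still. Their characteristics are listed in Table 1.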

| Table 1 Activity types and characteristics of flaw-detection workers |

1.2 Data acquisition

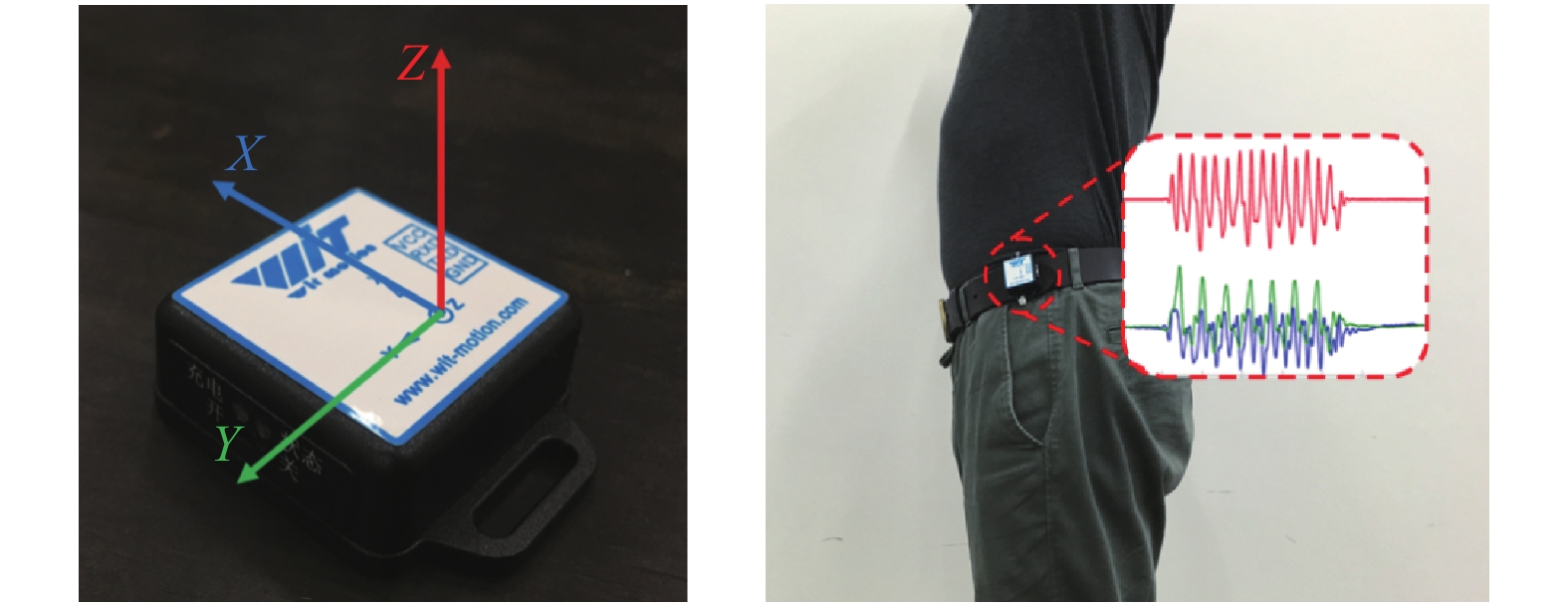

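Activity data are collected with a BWT901CL sensor module. The module integrates a triaxial accelerometer, a triaxial gyroscope, and a triaxial magnetometer. It has a built-in battery and Bluetooth and streams measurements to a host computer in real time. Only the triaxial acceleration data are used in this paper.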

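Based on a review of many studies, Ref. [16] found that an accelerometer worn at the waist is held more stably than at other body positions and better reflects whole-body movement. All activities studied here are whole-body activities, so the sensor is fixed at the waist, as shown in Figure 2.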

| Figure 2 The BWT901CL sensor and how it is worn |

1.3 Data preprocessing

1.3.1 Tilt correction

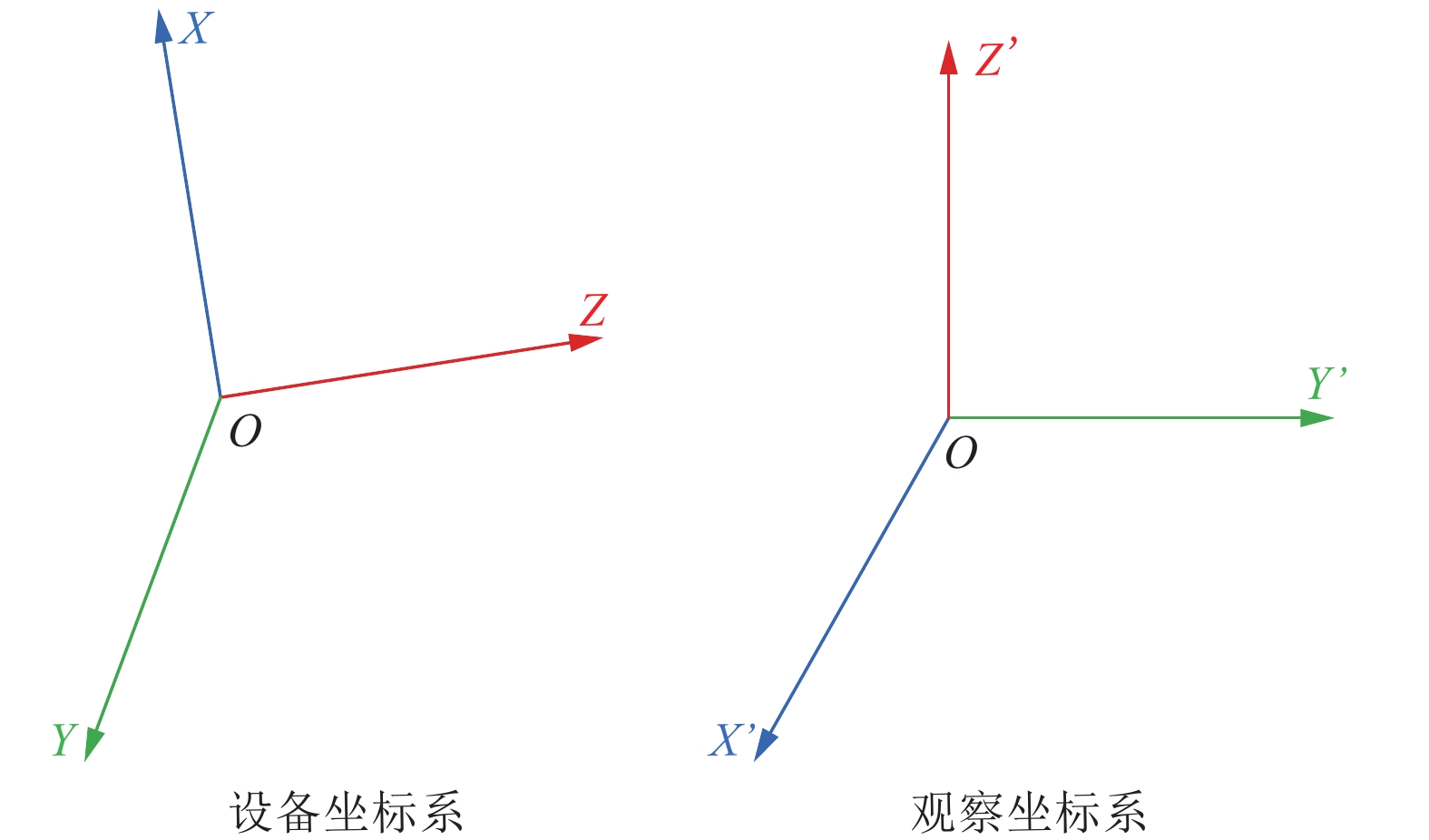

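The sensor module sits in a slightly different position on each person, so a coordinate transformation is needed to avoid the resulting errors. Figure 3 shows the two frames. O-XYZ is the device coordinate system, which is fixed relative to the device. O-X'Y'Z' is the observation coordinate system, in which the positive OX' axis points in the person's direction of travel.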

| Figure 3 Device and observation coordinate systems |

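Let α1, β1, γ1 be the angles between the observation axis OX' and the device axes OX, OY, and OZ. Define α2, β2, γ2 for OY' and α3, β3, γ3 for OZ' in the same way. Eq. (1) then converts a measured value (x, y, z) into the corresponding value (x', y', z') in the common observation coordinate system. The rows i, j, k of the matrix are the unit vectors of OX', OY', and OZ' expressed in device coordinates.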

| $\begin{bmatrix} x' \\ y' \\ z' \end{bmatrix} = \begin{bmatrix} \mathbf{i} \\ \mathbf{j} \\ \mathbf{k} \end{bmatrix} \begin{bmatrix} x \\ y \\ z \end{bmatrix} = \begin{bmatrix} \cos\alpha_1 & \cos\beta_1 & \cos\gamma_1 \\ \cos\alpha_2 & \cos\beta_2 & \cos\gamma_2 \\ \cos\alpha_3 & \cos\beta_3 & \cos\gamma_3 \end{bmatrix} \begin{bmatrix} x \\ y \\ z \end{bmatrix}$ | (1) |
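The following is a minimal NumPy sketch of Eq. (1). It assumes each row of a recording is one (x, y, z) accelerometer sample and that the 3×3 matrix of direction cosines is already known. The function name `to_observation_frame` is hypothetical. So are the example angles, which describe a device rotated 90° about its Z axis and only illustrate how the angles enter the matrix.

```python
import numpy as np

def to_observation_frame(samples, R):
    """Apply Eq. (1) to every sample.

    samples: (T, 3) array of device-frame readings (x, y, z).
    R: (3, 3) matrix whose rows are the direction cosines of OX', OY', OZ'.
    Returns a (T, 3) array of observation-frame readings (x', y', z').
    """
    samples = np.asarray(samples, dtype=float)
    # Row-wise R @ [x, y, z]^T for all samples at once
    return samples @ np.asarray(R, dtype=float).T

# Hypothetical example: OX' = device OY, OY' = -(device OX), OZ' = device OZ.
# Each row holds (alpha, beta, gamma) in degrees for one observation axis.
angles_deg = np.array([[90.0, 0.0, 90.0],
                       [180.0, 90.0, 90.0],
                       [90.0, 90.0, 0.0]])
R = np.cos(np.deg2rad(angles_deg))
print(np.round(to_observation_frame([[1.0, 2.0, 9.8]], R), 6))
```

Because the matrix is applied as a single product, the whole recording is transformed in one vectorized step rather than sample by sample.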

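The collected data contain the acceleration produced by body movement but also, inevitably, some noise. The main sources are the sensor's intrinsic noise, shaking of the sensor during movement, and the effect of gravitational acceleration. This noise must be removed before further processing [17].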

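This paper denoises the data with a moving-average filter, a typical linear filter that suppresses periodic noise well. Let N be the length of the sequence and M (odd) the window length. The n-th value yy(n) of the filtered sequence is the mean of the M-point window centered on the n-th original value y(n). Near the two ends the window shrinks symmetrically, as given in Eq. (2).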

| $yy(n) = \begin{cases} y(n), & n = 1, N \\ \dfrac{1}{2n-1}\displaystyle\sum\limits_{i=0}^{2n-2} y(1+i), & 1 < n < \dfrac{M+1}{2} \\ \dfrac{1}{M}\displaystyle\sum\limits_{i=(1-M)/2}^{(M-1)/2} y(n+i), & \dfrac{M+1}{2} \leqslant n \leqslant \dfrac{2N-M+1}{2} \\ \dfrac{1}{2N-2n+1}\displaystyle\sum\limits_{i=n-N}^{N-n} y(n+i), & \dfrac{2N-M+1}{2} < n < N \end{cases}$ | (2) |
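Below is a sketch of Eq. (2) in Python with NumPy, using 0-based indices. The function name `moving_average_shrinking` is hypothetical. The four cases of Eq. (2) collapse into one rule: the half-width at each position is the smaller of (M-1)/2 and the distance to the nearer end of the signal. As a result, the first and last samples pass through unchanged.

```python
import numpy as np

def moving_average_shrinking(y, M):
    """Centered moving average of odd length M (Eq. (2)).

    Near the ends the window shrinks symmetrically so it never runs
    past the signal; the first and last samples are copied unchanged.
    """
    if M < 1 or M % 2 == 0:
        raise ValueError("M must be a positive odd integer")
    y = np.asarray(y, dtype=float)
    N = len(y)
    half = (M - 1) // 2
    out = np.empty(N)
    for t in range(N):
        h = min(t, N - 1 - t, half)   # shrink the half-width at the edges
        out[t] = y[t - h:t + h + 1].mean()
    return out

print(moving_average_shrinking([0, 0, 6, 0, 0], 3))
```

With M = 3, the sequence [0, 0, 6, 0, 0] becomes [0, 2, 2, 2, 0]: the spike is spread over its neighbours, and the end points keep their original values.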

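The subsequent features are mostly statistics, so the data must be windowed: the continuous signal is divided into finite segments.

Many studies show that window length matters for activity recognition. A window should contain at least one full cycle of the activity [18,19]. Its exact length depends on the sensor's sampling rate and the activity period. A rectangular window is used in this paper.

To avoid mislabeling, adjacent windows should overlap. Studies [20,21] indicate that 50% overlap is optimal for feature selection, meaning that half of the data in each window is shared with the adjacent window. The number of data points in each segmented sample is the window length. Each sample is given a label indicating its activity type.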
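The segmentation step can be sketched as follows. The sketch assumes the preprocessed recording is a (T, 3) NumPy array and that every window taken from one recording inherits that recording's activity label. Dropping a trailing partial window is also an assumption, since the text does not say how the end of a recording is handled. The default window length of 128 samples matches the value given in Section 2.1. The function name `sliding_windows` is hypothetical.

```python
import numpy as np

def sliding_windows(signal, window=128, overlap=0.5):
    """Cut a (T, C) signal into rectangular windows of `window` samples.

    Consecutive windows start window * (1 - overlap) samples apart, so with
    overlap=0.5 half of each window is shared with its neighbour.
    A trailing partial window is dropped (assumption).
    """
    signal = np.asarray(signal)
    step = int(round(window * (1 - overlap)))
    if step < 1:
        raise ValueError("overlap leaves no step between windows")
    if len(signal) < window:
        return np.empty((0, window) + signal.shape[1:], dtype=signal.dtype)
    starts = range(0, len(signal) - window + 1, step)
    return np.stack([signal[s:s + window] for s in starts])

# Hypothetical 512-sample, 3-axis recording of a single activity
recording = np.arange(512 * 3, dtype=float).reshape(512, 3)
windows = sliding_windows(recording)
labels = np.full(len(windows), "walking")   # one label per window
print(windows.shape)
```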

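1.4 Feature extraction

Raw sensor data usually cannot be used directly, so features are extracted for machine learning. Choosing suitable features is key to efficient recognition. The following seven types of time- and frequency-domain features are extracted from the X-, Y-, and Z-axis data. N is the window length and A_i is the i-th value in the sample.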

(1) Mean

The mean is given by Eq. (3). It reflects the average magnitude of the acceleration.

| $mean = \frac{1}{N}\sum\limits_{i = 1}^N {{A_i}} $ | (3) |

(2) Standard deviation

The standard deviation std is given by Eq. (4). It reflects how much the motion fluctuates.

| $std = \sqrt{\frac{1}{N-1}\sum\limits_{i=1}^{N}(A_i - mean)^2}$ | (4) |

(3) Maximum

max is the largest acceleration value in the window and represents the upper bound of its range; see Eq. (5).

| $max = \max\limits_{1 \leqslant i \leqslant N} A_i$ | (5) |

(4) Interquartile range

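The interquartile range IQR is given by Eq. (6). To obtain Q1 and Q3, the data in the window are sorted in ascending order and divided into four equal parts; Q1 and Q3 are the values at the first and third quartile points. IQR reflects the dispersion of the acceleration values within the window and is robust to outliers. It therefore separates activities with similar mean values well.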

| $IQR = {Q_3} - {Q_1}$ | (6) |

(5) Correlation coefficient between two axes

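The correlation coefficient corr between two axes is given by Eq. (7), where A and A' are the data of two different axes among X, Y, and Z. It reflects the degree of linear correlation between the two axes.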

| $corr(A,A') = \frac{{\operatorname{cov} (A,A')}}{{st{d_A}st{d_{A'}}}},A \ne A'$ | (7) |

(6) Skewness

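Skewness is given by Eq. (8). It reflects the asymmetry of the acceleration values within the window, that is, whether they lean to the left or to the right.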

| $skewness = \frac{N}{(N-1)(N-2)\,std^3}\sum\limits_{i=1}^{N}(A_i - mean)^3$ | (8) |

(7) FFT coefficients

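The fast Fourier transform (FFT) converts the time-domain acceleration values into the frequency domain. The movements of railway track maintenance workers are low-frequency, so only the first three coefficients are kept: the results of Eq. (9) for k = 0, 1, 2.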

| $FFT(k) = \sum\limits_{i=1}^{N} A_i e^{-j\frac{2\pi}{N}k(i-1)}, \quad k = 0, 1, \cdots, N-1$ | (9) |
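These definitions add up to the 27-dimensional feature vector reported in the conclusion:

- five per-axis statistics (mean, std, max, IQR, skewness) × 3 axes = 15;
- 3 axis-pair correlations;
- 3 FFT coefficients × 3 axes = 9.

Below is a NumPy sketch that computes the vector for one (N, 3) window. Two details are assumptions that the text does not fix. Quartiles use NumPy's default linear interpolation. Each FFT coefficient is represented by its magnitude |FFT(k)|, so that it becomes one real number. The function names are hypothetical.

```python
import numpy as np

def skewness(a):
    """Eq. (8): adjusted sample skewness with the sample std (ddof=1)."""
    a = np.asarray(a, dtype=float)
    n = len(a)
    s = a.std(ddof=1)
    return n / ((n - 1) * (n - 2) * s ** 3) * np.sum((a - a.mean()) ** 3)

def window_features(w):
    """Map one (N, 3) window of X, Y, Z acceleration to a 27-dim vector.

    Order: [mean, std, max, IQR, skewness] for X, Y, Z (15 values),
    corr(X,Y), corr(X,Z), corr(Y,Z) (3 values),
    |FFT(k)| for k = 0, 1, 2 for X, Y, Z (9 values).
    """
    w = np.asarray(w, dtype=float)
    feats = []
    for axis in range(3):
        a = w[:, axis]
        q1, q3 = np.percentile(a, [25, 75])   # linear interpolation (assumption)
        feats += [a.mean(), a.std(ddof=1), a.max(), q3 - q1, skewness(a)]
    for p, q in [(0, 1), (0, 2), (1, 2)]:     # Eq. (7)
        feats.append(np.corrcoef(w[:, p], w[:, q])[0, 1])
    for axis in range(3):                     # Eq. (9), k = 0, 1, 2, magnitudes
        feats += list(np.abs(np.fft.fft(w[:, axis])[:3]))
    return np.array(feats)

rng = np.random.default_rng(0)
window = rng.normal(size=(128, 3))   # synthetic stand-in for one window
print(window_features(window).shape)
```

`np.fft.fft` indexes samples from 0, which matches the exponent k(i-1) in Eq. (9) with i running from 1.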

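Four classifiers widely used in activity recognition are selected for the machine learning experiments: the C4.5 decision tree, random forest, KNN, and SVM.

- KNN is conceptually simple and easy to implement [17].
- C4.5 is a mature decision tree algorithm. It is fast, produces intuitive classification results, and is highly practical [22].
- Random forest is an ensemble classifier that introduces randomness while the trees grow. This reduces the correlation between the trees and thus the generalization error of the ensemble [23].
- SVM is a supervised learning algorithm based on statistical learning theory and the structural risk minimization principle. Its main advantages are that it reduces both model complexity and training error and that it generalizes well [14].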

2 Experiments and results analysis

2.1 Experimental setup

Acceleration data were collected from 8 subjects, all male, aged 20–28 years, 1.6–1.8 m tall, and weighing 50–80 kg.

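To obtain the coordinate transformation matrix, vectors k and i are measured for each subject before data collection, following the requirements in Table 2. The two measurements are shown in Figure 4(a) and (b), respectively. In theory the angle between k and i is 90°. In practice it is hard to hold a perfectly standard posture, so any angle within 87°–93° is considered acceptable. Vector j is computed by Eq. (10).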

| $\begin{split} \mathbf{j} &= \mathbf{k} \times \mathbf{i} = (x_3, y_3, z_3) \times (x_1, y_1, z_1) \\ &= (y_3 z_1 - y_1 z_3,\ z_3 x_1 - z_1 x_3,\ x_3 y_1 - x_1 y_3) \end{split}$ | (10) |
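Here is a NumPy sketch of how the matrix of Eq. (1) could be assembled from the two measured vectors. It checks the 87°–93° tolerance, computes j = k × i as in Eq. (10), and stacks the normalized rows. The inputs are assumed to be 3-vectors in device coordinates. Averaging raw accelerometer readings to obtain them is a hypothetical choice, because the measurement procedure itself is given in Table 2. The function name `transform_matrix` is also hypothetical.

```python
import numpy as np

def transform_matrix(k_meas, i_meas, tol_deg=3.0):
    """Build the Eq. (1) matrix from measured vectors k and i.

    k_meas, i_meas: 3-vectors in device coordinates (for example, averaged
    accelerometer readings from the two calibration postures; assumption).
    Returns a 3x3 array whose rows are the unit vectors i, j, k.
    """
    k = np.asarray(k_meas, dtype=float)
    i = np.asarray(i_meas, dtype=float)
    k = k / np.linalg.norm(k)
    i = i / np.linalg.norm(i)
    angle = np.degrees(np.arccos(np.clip(np.dot(k, i), -1.0, 1.0)))
    if abs(angle - 90.0) > tol_deg:          # text accepts 87 to 93 degrees
        raise ValueError(f"angle between k and i is {angle:.1f} deg")
    j = np.cross(k, i)                       # Eq. (10): j = k x i
    j = j / np.linalg.norm(j)
    return np.vstack([i, j, k])

print(transform_matrix([0.0, 0.0, 9.8], [5.0, 0.0, 0.0]))
```

The measured i and k are only approximately perpendicular, so the resulting matrix is only approximately orthonormal. The text does not say whether i is re-orthogonalized, so the sketch leaves it as measured.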

| Figure 4 Measurement of vectors k and i |

| Table 2 Quantities measured for the coordinate transformation matrix and their requirements |

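Each subject performed the 7 activities, with 50 samples collected per activity. The experiments were conducted under conditions essentially the same as in the field. The slopes used for stepping onto and off the track met the railway shoulder slope standard, and all other activities were performed on standard track. Figure 5 shows, from left to right: stepping off the track, standing up, running, squatting, standing still, stepping onto the track, and walking.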

| Figure 5 Data collection for the seven activities |

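The main parameter settings of the preprocessing stage are listed in Table 3. The filter window length was chosen through filtering experiments on the collected data in MATLAB; it smooths the waveform without distorting it excessively. No activity in the collected samples took longer than 2 s, so the sliding window is set to 128 samples (2.56 s). This is long enough to contain at least one action, and as a power of two (2^7) it is convenient for the FFT.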
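The stated window of 128 samples lasting 2.56 s also fixes the sampling rate and the frequency resolution of the FFT features in Eq. (9). The following worked numbers are derived only from those two stated values:

```latex
f_s = \frac{128}{2.56\ \mathrm{s}} = 50\ \mathrm{Hz}, \qquad
\Delta f = \frac{f_s}{N} = \frac{50\ \mathrm{Hz}}{128} \approx 0.39\ \mathrm{Hz}
```

The three retained coefficients k = 0, 1, 2 therefore correspond to:

- 0 Hz, which is N times the window mean;
- about 0.39 Hz;
- about 0.78 Hz.

With 50% overlap, consecutive windows start 64 samples (1.28 s) apart.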

| Table 3 Main parameter settings in preprocessing |

2.2 Classification results and analysis

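This paper uses WEKA, the open-source machine learning platform from the University of Waikato, with four classifiers: J48, RandomForest, IBk, and LibSVM. The optimal parameters are first found by grid search (GridSearch) and are listed in Table 4.

The models are then evaluated by 10-fold cross-validation. The set of acceleration feature vectors with ground-truth activity labels is divided into 10 parts. Each part is used in turn as the test set, with the remaining 9 as the training set. The mean of the 10 classification accuracies is taken as the accuracy estimate of the method [22].

Table 5 gives the average recognition accuracy of the four algorithms over the 7 activities. Tables 6–9 give their confusion matrices, in which rows are the true classes and columns are the predicted classes. For example, the first row of Table 6 means that of 240 stepping-off samples, 237 were recognized as stepping off, 1 as running, 1 as standing still, and 1 as stepping onto the track.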
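A NumPy sketch of the 10-fold protocol and of the confusion-matrix layout used in Tables 6–9 (rows = true class, columns = predicted class) may help. `fit_predict` is a hypothetical callback that stands in for any of the four WEKA classifiers. Class labels are assumed to be integers from 0 to C-1. The folds here are a plain random split. WEKA's own cross-validation also stratifies folds by class, so this illustrates the protocol rather than reproducing the reported numbers.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, n_classes):
    """Rows = true class, columns = predicted class (layout of Tables 6-9)."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    for t, p in zip(y_true, y_pred):
        cm[t, p] += 1
    return cm

def cross_validate(X, y, fit_predict, n_classes, n_folds=10, seed=0):
    """Plain k-fold CV: each fold is the test set once, the rest train.

    Returns the mean of the per-fold accuracies and the confusion matrix
    pooled over all folds.
    """
    X, y = np.asarray(X), np.asarray(y)
    idx = np.random.default_rng(seed).permutation(len(y))
    folds = np.array_split(idx, n_folds)
    accs = []
    cm = np.zeros((n_classes, n_classes), dtype=int)
    for f, test in enumerate(folds):
        train = np.concatenate([g for h, g in enumerate(folds) if h != f])
        pred = np.asarray(fit_predict(X[train], y[train], X[test]))
        accs.append(np.mean(pred == y[test]))
        cm += confusion_matrix(y[test], pred, n_classes)
    return float(np.mean(accs)), cm

# Toy check with a hypothetical "oracle" whose single feature is the label
y = np.repeat(np.arange(3), 10)
X = y[:, None].astype(float)
oracle = lambda X_tr, y_tr, X_te: X_te[:, 0].astype(int)
acc, cm = cross_validate(X, y, oracle, n_classes=3)
print(acc)
print(cm)
```

In this layout, the per-class recall of a row is its diagonal entry divided by the row sum. For the first row of Table 6 that gives 237/240.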

| Table 4 Classifiers and parameter settings |

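The average recognition accuracies in Table 5 show that all four algorithms recognize the 7 activities well. SVM performs best, and the C4.5 decision tree is relatively the weakest.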

| Table 5 Average recognition accuracy of the four algorithms (%) |

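Table 6 shows that the model built by the C4.5 decision tree misclassifies some samples of every class. The main reason is that a decision tree is grown greedily and easily overfits if its growth is not controlled. C4.5 addresses this with pruning, but pruning in turn introduces some errors. The result is therefore a compromise, a tree that performs well overall, and the observed errors are reasonable.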

| Table 6 Confusion matrix of the C4.5 decision tree |

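Tables 5 and 7 show that random forest outperforms the C4.5 decision tree in both overall average recognition rate and per-class accuracy. Random forest draws bootstrap resamples of the training samples and selects random subsets of the features. It builds many decision trees into a "forest" and lets the trees vote on the final decision. This gives it better generalization and classification performance than a single decision tree.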

| Table 7 Confusion matrix of random forest |

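Table 8 shows that KNN performs very well under these experimental conditions: apart from some confusion between standing up and squatting, all activities are recognized correctly. However, KNN classifies a new sample by comparing it with all known samples and sorting them. When the training set is large, the computational cost becomes large, which should be considered in practical applications.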
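A brute-force KNN sketch in NumPy makes this cost concrete: every query computes distances to all n training samples and sorts them. Euclidean distance, majority voting, and the default k = 1 are assumptions here; the parameters actually used are those in Table 4. This is not WEKA's IBk implementation, and the function name `knn_predict` is hypothetical.

```python
import numpy as np

def knn_predict(X_train, y_train, X_test, k=1):
    """Brute-force k-nearest-neighbour classifier (Euclidean distance).

    Every test sample is compared with all training samples, so each query
    costs O(n_train) distance evaluations plus a sort.
    """
    X_train = np.asarray(X_train, dtype=float)
    X_test = np.asarray(X_test, dtype=float)
    y_train = np.asarray(y_train)
    # (n_test, n_train) matrix of squared distances
    d2 = ((X_test[:, None, :] - X_train[None, :, :]) ** 2).sum(axis=2)
    nearest = np.argsort(d2, axis=1)[:, :k]
    preds = []
    for row in y_train[nearest]:
        labels, counts = np.unique(row, return_counts=True)
        preds.append(labels[np.argmax(counts)])  # majority vote; ties -> smallest label
    return np.array(preds)

X_train = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 10.0], [10.0, 11.0]])
y_train = np.array([0, 0, 1, 1])
print(knn_predict(X_train, y_train, [[0.0, 0.4], [9.0, 9.0]], k=3))
```

The (n_test, n_train) distance matrix grows with both sets. For large training sets, a spatial index or batching would be needed, which is the practical concern raised above.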

| Table 8 Confusion matrix of KNN |

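Table 9 shows the classification results of SVM. The most easily confused activities are again standing up and squatting. In the experiments, SVM performance depended mainly on four factors: the penalty coefficient cost, the gamma coefficient, the choice of kernel, and whether the samples were normalized. The table gives the best results obtained after parameter tuning and normalization.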
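The text does not specify the normalization applied before SVM training. A common choice, assumed here, is min-max scaling of each feature to [0, 1]. The minimum and range are taken from the training fold only, so that no information leaks from the test fold. The function names are hypothetical.

```python
import numpy as np

def fit_minmax(X_train):
    """Per-feature minimum and range, computed on the training fold only."""
    X_train = np.asarray(X_train, dtype=float)
    lo = X_train.min(axis=0)
    span = X_train.max(axis=0) - lo
    span[span == 0] = 1.0   # constant features: avoid division by zero
    return lo, span

def apply_minmax(X, lo, span):
    """Map training values into [0, 1]; test values may fall slightly outside."""
    return (np.asarray(X, dtype=float) - lo) / span

X_tr = np.array([[0.0, 5.0], [10.0, 5.0], [5.0, 5.0]])
lo, span = fit_minmax(X_tr)
print(apply_minmax(X_tr, lo, span))
```

Scaling matters for an RBF-kernel SVM in particular, because the kernel depends on Euclidean distances. Features with large numeric ranges, such as the FFT magnitudes, would otherwise dominate features such as the correlation coefficients.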

| Table 9 Confusion matrix of SVM |

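All four confusion matrices show that standing up and squatting are the most easily confused of the 7 main activities of rail flaw-detection workers. The main reasons are that the two activities are inherently similar and that the current features do not represent them well enough. Future work should extract features that better capture the characteristics of each, or enlarge the dataset.

All four algorithms recognize the remaining 5 activities well. In engineering practice, the most suitable algorithm can be chosen according to the system architecture, hardware and software performance, and other specific conditions.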

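3 Conclusion

Using an accelerometer, this paper collected data on 7 activities of rail flaw-detection workers under simulated conditions and extracted 27-dimensional motion features. Four classification algorithms were compared under 10-fold cross-validation: C4.5 decision tree, random forest, KNN, and SVM. SVM achieved the highest accuracy, 99.2%, and recognized the activities very well on this dataset. The study has engineering value for eliminating behavioral safety hazards among railway field workers.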

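[1] China Railway Corporation. TG/GW101-2014 Safety rules for track maintenance of conventional-speed railways. Beijing: Transport Bureau of China Railway Corporation, 2014. (in Chinese)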

[2] Huang HC. Research on strengthening the safety protection of railway field workers. Engineering Technology, 2016, 18: 261–262. (in Chinese)

[3] Lin Q, Tian SL. Behavior recognition and intelligent computing. Xi'an: Xidian University Press, 2016. 100–101. (in Chinese)

[4] Zhang HB, Li SZ, Guo F, et al. Real-time human action recognition based on shape combined with motion feature. Proceedings of 2010 IEEE International Conference on Intelligent Computing and Intelligent Systems. Xiamen, China. 2010. 633–637.

[5] Zhang Z, Liu J. Recognizing human action and identity based on affine-SIFT. Proceedings of 2012 IEEE Symposium on Electrical & Electronics Engineering. Kuala Lumpur, Malaysia. 2012. 216–219.

[6] Sowmya KS. Construction workers activity detection using BOF. Proceedings of 2017 International Conference on Recent Advances in Electronics and Communication Technology. Bangalore, India. 2017. 159–163.

[7] Jiang ZL, Rozgic V, Adali S. Learning spatiotemporal features for infrared action recognition with 3D convolutional neural networks. Proceedings of 2017 IEEE Conference on Computer Vision and Pattern Recognition Workshops. Honolulu, HI, USA. 2017. 309–317.

[8] Liu Y, Lu ZY, Li J, et al. Global temporal representation based CNNs for infrared action recognition. IEEE Signal Processing Letters, 2018, 25(6): 848–852. DOI:10.1109/LSP.2018.2823910

[9] Huang YC, Yi CW, Peng WC, et al. A study on multiple wearable sensors for activity recognition. Proceedings of 2017 IEEE Conference on Dependable and Secure Computing. Taipei, China. 2017. 449–452.

[10] Ravì D, Wong C, Lo B, et al. A deep learning approach to on-node sensor data analytics for mobile or wearable devices. IEEE Journal of Biomedical and Health Informatics, 2017, 21(1): 56–64. DOI:10.1109/JBHI.2016.2633287

[11] Cardoso HL, Moreira JM. Human activity recognition by means of online semi-supervised learning. Proceedings of the 2016 17th IEEE International Conference on Mobile Data Management. Porto, Portugal. 2016. 75–77.

[12] Khan SH, Sohail M. Activity monitoring of workers using single wearable inertial sensor. Proceedings of 2013 International Conference on Open Source Systems and Technologies. Lahore, Pakistan. 2013. 60–67.

[13] Ward JA, Lukowicz P, Troster G, et al. Activity recognition of assembly tasks using body-worn microphones and accelerometers. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2006, 28(10): 1553–1567. DOI:10.1109/TPAMI.2006.197

[14] Mekruksavanich S, Hnoohom N, Jitpattanakul A. Smartwatch-based sitting detection with human activity recognition for office workers syndrome. Proceedings of 2018 International ECTI Northern Section Conference on Electrical, Electronics, Computer and Telecommunications Engineering. Chiang Rai, Thailand. 2018. 160–164.

[15] Qinhuangdao Television. Railway flaw-detection workers: busy "track doctors". http://v.pptv.com/show/ia9BZ1j6kFFK1M5s.html. [2018-11-15] (in Chinese)

[16] Cornacchia M, Ozcan K, Zheng Y, et al. A survey on activity detection and classification using wearable sensors. IEEE Sensors Journal, 2017, 17(2): 386–403. DOI:10.1109/JSEN.2016.2628346

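[17] Yang B. Research and application of human motion recognition technology based on intelligent mobile terminals [Master's thesis]. Chengdu: Southwest Jiaotong University, 2017. (in Chinese)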

[18] Siirtola P, Röning J. Ready-to-use activity recognition for smartphones. Proceedings of 2013 IEEE Symposium on Computational Intelligence and Data Mining. Singapore. 2013. 59–64.

[19] Álvarez de la Concepción MA, Soria Morillo LM, Gonzalez-Abril L, et al. Discrete techniques applied to low-energy mobile human activity recognition. A new approach. Expert Systems with Applications, 2014, 41(14): 6138–6146.

[20] Bayat A, Pomplun M, Tran DA. A study on human activity recognition using accelerometer data from smartphones. Procedia Computer Science, 2014, 34: 450–457. DOI:10.1016/j.procs.2014.07.009

[21] Ravi N, Dandekar N, Mysore P, et al. Activity recognition from accelerometer data. Proceedings of the 17th Conference on Innovative Applications of Artificial Intelligence. Pittsburgh, PA, USA. 2005.

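[22] Qiang MS, Zhang DC, Jiang HC. Construction activity recognition method for construction workers based on acceleration sensors. Journal of Tsinghua University (Science and Technology), 2017, 57(12): 1338–1344. (in Chinese)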

[23] Geurts P, Ernst D, Wehenkel L. Extremely randomized trees. Machine Learning, 2006, 63(1): 3–42. DOI:10.1007/s10994-006-6226-1

2019, Vol. 28
