2. 中国科学院大学, 北京 100049;

3. 中国科学院 计算机网络信息中心, 北京 100190

2. University of Chinese Academy of Sciences, Beijing 100049, China;

3. Computer Network Information Center, Chinese Academy of Sciences, Beijing 100190, China

在计算机视觉与图像处理工作中, 图像恢复是该领域的一个重要问题之一, 在过去的几十年里, 该问题得到了广泛的研究[1–7]. 在现实生活中, 由于数字图像含有丰富的科学信息, 所以图像处理已经被应用到人类生活的方方面面. 例如: 在医学领域, 通过图像处理技术, 研究人员可以通过图像去燥算法把一幅受噪声污染或者信息缺失的图像从中恢复出来, 从而提供清晰的图像帮助医生做出准确地医学诊断, 从而实现实际意义的“精准医疗”. 在自然保护区领域, 研究学者可以通过对相关保护区域遥感图像的分析, 进行濒危物种的保护, 从而帮助自然保护工作者做出精准决策. 在气象农业领域, 通过对遥感图像进行去燥处理, 从而可以对其进行图像分析, 可以对土地覆盖分类、植被分布、病虫害防治等做出辅助决策. 在现实生活的图像处理技术中, 图像的质量是任何研究的重点, 图像去燥就是提高图像质量的重要环节.

1 图像恢复概述基于变分图像去燥算法可以有如下的表达式, 设

| ${\bf{f}} = {\bf{Au}} + {\bf{n}}$ | (1) |

其中,

| ${\bf{u}} = \mathop {\arg \min }\limits_{\bf{u}} \left\{ {\left\| {{\bf{f}}} - {{\bf{Au}}} \right\|_2^2 + \lambda {\bf{R}}\left( {\bf{u}} \right)} \right\}$ | (2) |

其中,

| ${\bf{u}} = \mathop {\arg \min }\limits_{\bf{u}} \left\{ {{{\left\| {{\bf{f}}} - {{\bf{Au}}} \right\|}_{{{0 \; {\rm {or}} \; 1}}}} + \lambda {\bf{R}}\left( {\bf{u}} \right)} \right\}$ | (3) |

著名的正则方法是变分正则[8], 其中凸变分正则要求该模型算法解存在并且唯一. 在最近几年中, 人们为了得到恢复后的图像能保持更多的图像细节信息, 从而相关学者尝试应用非凸非光滑优化正则方法, 来保持图像的细节信息. 例如: 在变分框架和统计学习框架中, 相关学者引入了非凸非光滑优化[9,10], 如文献[11,12]对该现象给出了数值例子, 文献[13]对该现象给出了理论解释.

在过去的几十年中, 相关学者对非凸非光滑优化正则方法, 提出了许多数值解法, 如最小二次方重权迭代法[11], 半二次算法[14] 等. 在本文中, 我们利用非凸非光滑优化来对脉冲噪声进行去除处理. 为了处理非凸非光滑性, 本文采用了多阶凸松弛方法对模型进行求解[15], 从其数值例子可以看出, 其近似解比标准的L1凸或者L1凸松弛方法的逼近解好了许多, 虽然该方法仅导致原始非凸问题的局部最优解, 但该局部解是对初始凸松弛的全局解的改进. 此外, 因为每个阶段都是凸优化问题, 所以该方法在计算上是高效的.

2 相关工作从著名的贝叶斯(MAP)模型出发, 对于观察到的图像

| ${\hat{\bf u}} = \mathop {\arg \max }\limits_{\bf{u}} p\left( {{\bf{u}} | {\bf{f}}} \right)$ | (4) |

在求解过程中把观察到的图像

利用贝叶斯法则, 可以得到如下表达式:

| $\begin{array}{l}{\hat{\bf u}} = \mathop {\arg \max }\limits_{\bf{u}} p\left( {{\bf{u}} | {\bf{f}}} \right)\\\;\; \;\; = \mathop {\arg \max }\limits_{\bf{u}} \displaystyle\frac{{p\left( {{\bf{f}} | {\bf{u}}} \right)p\left( {\bf{u}} \right)}}{{p\left( {\bf{f}} \right)}}\\\;\; \;\; = \mathop {{\mathop{\rm argmin}\nolimits} }\limits_{\bf{u}} - \log \left( {{\bf{f}} | {\bf{u}}} \right) - \log \left( {\bf{u}} \right)\\\;\; \;\; = \mathop {{\mathop{\rm argmin}\nolimits} }\limits_{\bf{u}} - \int\limits_\Omega {\log p\left( {{\bf{f}}\left( {\bf{x}} \right)|{\bf{u}}\left( {\bf{x}} \right)} \right)} dx - \log p\left( {\bf{u}} \right)\end{array}$ | (5) |

例如, 假设观察到的图像

| ${\hat{\bf u}} = \mathop {{ \arg \min } }\limits_{\bf{u}} \left\| {{\bf{f}} - {\bf{u}}} \right\|_1^2 + p\left( {\bf{u}} \right)$ | (6) |

其中, 我们假设

| $p\left( {\bf{u}} \right) = \exp \left( { - \gamma \int\limits_\Omega {\phi \left( {{\bf{u}}\left( {\bf{x}} \right)} \right)} } \right)d{\bf{x}}$ | (7) |

其中

| ${\hat{\bf u}} = \mathop {{ \arg \min } }\limits_{\bf{u}} \left\{ {\frac{{{\gamma _1}}}{2}\left\| {{\bf{f}} - {\bf{Au}}} \right\|_2^2 + \frac{\alpha }{2}\left\| {\nabla {\bf{u}}\left( {\bf{x}} \right)} \right\|_2^2 + {{\left\| {\nabla {\bf{u}}\left( {\bf{x}} \right)} \right\|}_1}} \right\}$ | (8) |

其中,

由于L1-范数能很好的拟合脉冲信号, 所以为了有效的去除脉冲信号, L1数据保真项在如下的文章中被广泛使用[19–21], 例如: 基于TVL1模型, 相关学者提出了如下的脉冲信号去除模型STVL1 (Squares TVL1):

| ${\hat{\bf u}} = \mathop {{\mathop{\rm argmin}\nolimits} }\limits_{\bf{u}} \left\{ {\frac{{{\gamma _1}}}{2}{{\left\| {{\bf{f}} - {\bf{Au}}} \right\|}_1}{\rm{ + }}\frac{\alpha }{2}\left\| {\nabla {\bf{u}}\left( {\bf{x}} \right)} \right\|_2^2 + {{\left\| {\nabla {\bf{u}}\left( {\bf{x}} \right)} \right\|}_1}} \right\}$ | (9) |

为了去除混合噪声, Shi[7]联合了式(8)、(9)提出了如下的去燥模型:

| ${\hat{\bf u}} = \mathop {{ \arg \min } }\limits_{\bf u} \left\{ {\begin{array}{*{20}{c}} {\displaystyle\frac{{{\gamma _1}}}{2}{{\left\| {{\bf{f}} - {\bf{Au}}} \right\|}_1} + \displaystyle\frac{{{\gamma _2}}}{2}\left\| {{\bf{f}} - {\bf{Au}}} \right\|_2^2} \\ { + \displaystyle\frac{\alpha }{2}\left\| {\nabla {\bf{u}}\left( {\bf{x}} \right)} \right\|_2^2 + {{\left\| {\nabla {\bf{u}}\left( {\bf{x}} \right)} \right\|}_1}} \end{array}} \right\}$ | (10) |

但是, 这些模型虽然能去除脉冲噪声, 但是还有很大的提升空间, 所以我们基于以上的几个模型, 提出了如下的脉冲噪声去除模型:

| ${\hat{\bf u}} = \mathop {{ \arg \min } }\limits_{\bf{u}} \left\{ {\lambda {{\left\| {{\bf{f}} - {\bf{Au}}} \right\|}_1} + \frac{\alpha }{2}\left\| {\nabla {\bf{u}}\left( {\bf{x}} \right)} \right\|_2^2 + p\left( {\bf{u}} \right)} \right\}$ | (11) |

其中,

| $p\left( {\bf{u}} \right) = \exp \left( { - \gamma \int\limits_\Omega {\phi \left( {{\bf{u}}\left( {\bf{x}} \right)} \right)} } \right)d{\bf{x}} = \int {\phi \left( {\left| {\nabla {\bf{u}}} \right|} \right)} d{\bf{x}}$ | (12) |

为Gibbs先验, Gibbs先验中我们选择

| $\phi \left( t \right) = \arctan \left( {\frac{{1 + 2\rho \left| {{t}} \right|}}{{\sqrt 3 }}} \right) - \frac{\pi }{6}$ | (13) |

我们利用变量分离技术和ADMM方法[10,22]对模型进行求解,同时为了处理非凸非光滑正则项, 我们应用了多阶凸松弛方法对模型进行求解, 虽然该方法仅导致原始非凸问题的局部最优解, 但该局部解是对初始凸松弛的全局解的改进. 此外, 因为每个阶段都是凸优化问题, 所以该方法在计算上是高效的.

引入中间变量,

| $\left\{\begin{array}{*{20}{l}} {\mathop {\min }\limits_{{\bf{u}}, {\bf{h}}, {\bf{d}}} \left\{ {\lambda \int\limits_\Omega {\left| {\bf{h}} \right|d{\bf{x}} + \displaystyle\frac{\alpha }{2}\left\| {\nabla {\bf{u}}} \right\|_2^2 + \int\limits_\Omega {\phi \left( {\left| {\bf{d}} \right|} \right)d{\bf{x}}} } } \right\}} \\ {{\rm{s}}{\rm{.t}}.\;\; {\bf{h}} = {\bf{f}} - {\bf{Au}},\nabla {\bf{u}} = {\bf{d}}} \end{array}\right.$ | (14) |

求解(14)等价于求解如下的无约束问题:

| $\begin{array}{l}\left( {{{\bf{u}}^{k + 1}},{{\bf{h}}^{k + 1}},{{\bf{d}}^{k + 1}}} \right){\rm{ = }}\\ \mathop {\arg \min }\limits_{{\bf{u}},{\bf{h}},{\bf{d}}} \left\{ {\begin{array}{*{20}{c}}{\lambda \int\limits_\Omega {\left| {\bf{h}} \right|d{\bf{x}} + \displaystyle\frac{\alpha }{2}\left\| {\nabla {\bf{u}}} \right\|_2^2 + \int\limits_\Omega {\phi \left( {\left| {\bf{d}} \right|} \right)d{\bf{x}}{\rm{ + }}} } }\\{\displaystyle\frac{{{\gamma _1}}}{2}\left\| {{\bf{h}} - \left( {{\bf{f}} - {\bf{Au}}} \right) - {\bf{b}}_1^k} \right\|_2^2 + \displaystyle\frac{{{\gamma _2}}}{2}\left\| {{\bf{d}} - \nabla {\bf{u}} - {\bf{b}}_2^k} \right\|_2^2}\end{array}} \right\}\end{array}$ | (15) |

其中,

| ${\bf{b}}_1^{k + 1} = {\bf{b}}_1^k + \left( {{\bf{f}} - {{\bf{Au}}^{k + 1}}} \right) - {{\bf{h}}^{k + 1}}$ | (16) |

| ${\bf{b}}_2^{k + 1} = {\bf{b}}_2^k + \nabla {{\bf{u}}^{k + 1}} - {{\bf{d}}^{k + 1}}$ | (17) |

通过变量分离技术可以得到如下关于

| ${{\bf{u}}^{k + 1}} \in \mathop {\arg \min }\limits_{\bf{u}} \left\{ {\begin{array}{*{20}{c}} {\displaystyle\frac{\alpha }{2}\left\| {\nabla u} \right\|_2^2 + \frac{{{\gamma _1}}}{2}\left\| {{\bf{h}} - \left( {{\bf{f}} - {\bf{Au}}} \right) - {\bf{b}}_1^k} \right\|_2^2} \\ { + \displaystyle\frac{{{\gamma _2}}}{2}\left\| {{{\bf{d}}^k} - \nabla {\bf{u}} - {\bf{b}}_2^k} \right\|_2^2} \end{array}} \right\}$ | (18) |

优化值

| $\begin{array}{l}\left( {\alpha + {\gamma _2}} \right) {\Delta {\bf{u}}} + {\gamma _1}{{\bf{A}}^{\rm{T}}}{\bf{Au}}{\rm{ = }}{\gamma _1}{{\bf{A}}^{\rm{T}}}\left( {{\bf{h}} - {\bf{f}} - {\bf{b}}_1^k} \right) + {\gamma _2}div\left( {{{\bf{d}}^k} - {\bf{b}}_2^k} \right)\end{array}$ | (19) |

可以利用高斯赛德尔迭代或者FFT对上式进行求解. 这里选择FFT对上式进行求解, 求解结果如下:

| ${{\bf{u}}^{k + 1}} = {\cal{F}^{ - 1}}\left( {\frac{{{\gamma _1}\cal{F}\left( {{{\bf{A}}^{\bf{T}}}\left( {{{\bf{h}} - {\bf{f}} - {\bf{b}}}_1^k} \right)} \right) + {\gamma _2}\cal{F}\left( {div\left( {{{\bf{d}}^k} - {\bf{b}}_2^k} \right)} \right)}}{{{\gamma _1}\cal{F}\left( {{{\bf{A}}^{\rm{T}}}{\bf{A}}} \right) + \left( {\alpha + {\gamma _2}} \right)\cal{F}\left( {{\Delta }} \right)}}} \right)$ | (20) |

| ${{\bf{h}}^{k + 1}} = shrink\left( {{\bf{f}} + {\bf{b}}_1^k - {\bf{A}}{{\bf{u}}^{k + 1}},\frac{\lambda }{{{\gamma _1}}}} \right)$ | (21) |

其中, 收缩算子定义如下:

| $shrink\left( {{s_\alpha },{t_\alpha }} \right) = \frac{{{s_\alpha }}}{{\left| {{s_\alpha }} \right|}}\max \left( {\left| {{s_\alpha }} \right| - {t_\alpha },0} \right)$ | (22) |

通过变量分离技术, 为了得到

| $\mathop {\min }\limits_{\bf{d}} \left\{ {\int {\phi \left( {\left| {\bf{d}} \right|} \right)dx + \frac{{{\gamma _2}}}{2}\left\| {{\bf{d}} - {\bf{s}}} \right\|_2^2} } \right\}$ | (23) |

其中,

| ${{\bf{d}}^{k + 1,l + 1}} = shrink\left( {{\bf{s}},\frac{{{{\bf{v}}^{k + 1,l}}}}{\gamma }} \right)$ | (24) |

其中,

| ${\bf{v}}_1^{k + 1,l + 1} = \frac{1}{{{{\left( {1 + \rho \left| {{{\bf{d}}^{k + 1,l + 1}}} \right|} \right)}^2}}}$ | (25) |

为了检验对脉冲噪声的去噪效果, 设计了如下算法1.

算法1. 脉冲噪声去除算法

1) 初始化: 设k=0, 选择

While “not converged” do

2) 计算

3) 计算

4) 计算

5) 间乘子更新:

End while

4 实验分析本节中, 我们对该模型有效性进行了实验. 首先我们使用遗传算法选择模型的最优参数, 然后我们在多个不同噪声图像上与其他模型的去噪声效果进行比较. 但是遗传算法可能陷入局部极值, 所以我们对本文防止遗传算法陷入局部极值做了如下的处理. 因为, 产生局部收敛的原因之一是群体中多样性过早的减少, 使得遗传算法的搜索空间大大减少, 虽然到现在为止遗传算法对参数选择的控制还没有理论指导, 但是我们可以用以下方法加以改进, 在计算多样度时, 保证群体中所有个体在某一基因位取相等值的个数不小于一定阈值, 才允许多样度的计算有贡献.

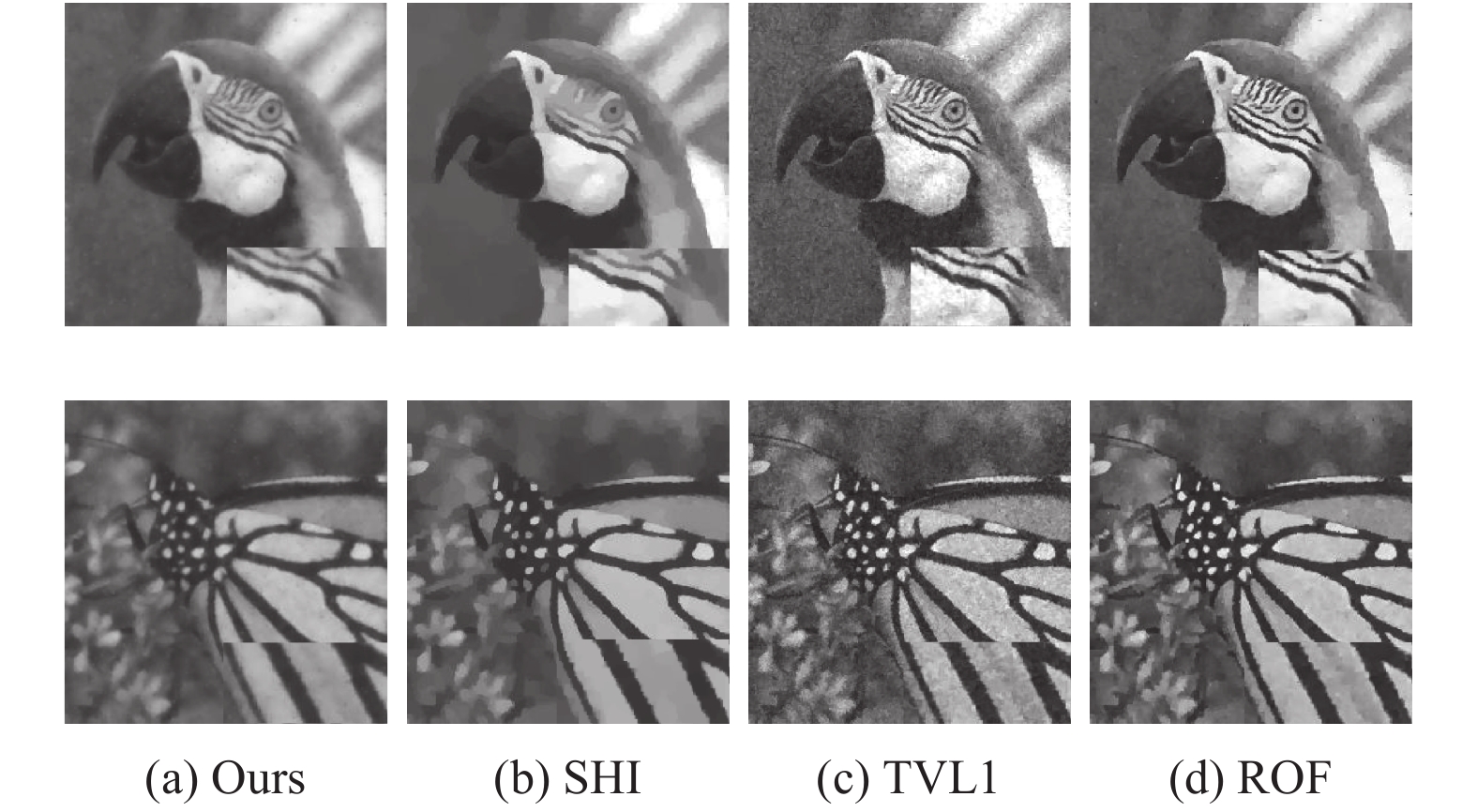

本次实验中我们与TVL1, ROF以及论文SHI中的方法进行了比较, 使用的图片有Lina, Cameraman, parrots, parrots. 本次实验主要比较了图像去燥之后的ISNR, PSNR值如下:

| $PSNR = 20{\log _{10}}\frac{{255mn}}{{{{\left\| {\tilde u - u} \right\|}_2}}}$ | (26) |

| $ISNR = 10*{\log _{10}}\frac{{\sum {_{i,j}{{\left( {{u_{i,j}} - {f_{i,j}}} \right)}^2}} }}{{\sum {_{i,j}{{\left( {{u_{i,j}} - {{\tilde u}_{i,j}}} \right)}^2}} }}$ | (27) |

该部分, 我们主要讨论如何对参数进行选择, 在我们提出的模型算法中, 共有5个参数需要进行选择. 通常参数选择的方法有两种: 第一种是通过实验人员的经验来设置[19–21]; 第二种方法是固定几个参数的值, 修改其他参数的值来获得最优参数[7].

本次实验中, 我们使用了遗传算法来寻找最优的参数. 在我们的模型中, 需要选择的参数是(a, b, c, d,…), 其中(a>0, b<0, d>0). 我们的目标是在参数空间中选择一个最优点. 为此, 我们假设最优点为使得在训练集P上的平均ISNR值最大的参数, 遗传算法的目标函数为:

| $\left\{ {\begin{aligned}& {P = \left\{ {{p_1},{p_2},\cdots,{p_n}} \right\}}\\& {O\left( {a,b,c,d} \right) = \sum\limits_{p \in P} {ISNR\left( {M\left( {p|a,b,c,d} \right)} \right)} }\end{aligned}} \right.$ | (28) |

其中, pi为训练集中的图片, a, b, c, d为需要选择的模型参数, M代表提出模型, O为遗传算法的目标函数.

在遗传算法中, 我们设置染色体个数为20条, 遗传率为85%, 繁殖代数为400. 根据运行结果, 我们选择的最优参数为:

| $\alpha = 1.7,{\gamma _1} = 15,{\gamma _2} = 0.3,\lambda = 3,\rho = 0.32.$ |

本文中, 我们对不同模型在纯脉冲噪声图像上的的去燥效果效果进行比较. 其中脉冲噪声主要考虑了椒盐噪声, 噪声level分别为0.01, 0.1, 0.4, 其中4种模型对不同级的椒盐噪声图像去燥的ISNR、PSNR结果如表1所示, 去燥后的图片如图1所示.

| 表 1 本模型与其他经典模型对脉冲噪声去燥效果指标对照 |

|

图 1 模型对脉冲噪声去燥效果图 |

5 总结与展望

本文综合TVL1、ROF、模型STVL1(Squares TVL1)、Shi-模型, 提出了非凸非光滑关于脉冲噪声去除模型, 并使用变量分离技术的ADMM算法对模型进行求解, 为了处理模型的非凸非光滑项, 本文应用了多阶凸松弛方法对子问题进行了求解, 利用遗传算法对模型参数进行选择, 通过在不同图片及不同噪声上的大量实验表明, 该模型的鲁棒性、运行时间和ISNR、PSNR都优于其他三种模型. 并且该模型能够保持图像的局部信息具有更好的可视化质量.

| [1] |

Buades A, Coll B, Morel JM. A non-local algorithm for image denoising. Proceedings of 2005 IEEE Computer Society Conference on Computer Vision and Pattern Recognition. San Diego, CA, USA. 2005, 2: 60–65.

|

| [2] |

Elad M, Aharon M. Image denoising via sparse and redundant representations over learned dictionaries. IEEE Transactions on Image Processing, 2006, 15(12): 3736-3745. DOI:10.1109/TIP.2006.881969 |

| [3] |

Dabov K, Foi A, Katkovnik V, et al. Image denoising by sparse 3-D transform-domain collaborative filtering. IEEE Transactions on Image Processing, 2007, 16(8): 2080-2095. DOI:10.1109/TIP.2007.901238 |

| [4] |

Dong WS, Li X, Zhang L, et al. Sparsity-based image denoising via dictionary learning and structural clustering. Proceedings of CVPR 2011. Colorado Springs, CO, USA. 2011. 457–464.

|

| [5] |

Yan M. Restoration of images corrupted by impulse noise and mixed Gaussian impulse noise using blind inpainting. arXiv: 1304.1408, 2013.

|

| [6] |

Huang T, Dong WS, Xie XM, et al. Mixed noise removal via Laplacian scale mixture modeling and nonlocal low-rank approximation. IEEE Transactions on Image Processing, 2017, 26(7): 3171-3186. DOI:10.1109/TIP.2017.2676466 |

| [7] |

Jia TT, Shi YY, Zhu YG, et al. An image restoration model combining mixed L1/L2 fidelity terms. Journal of Visual Communication and Image Representation, 2016, 38: 461-473. DOI:10.1016/j.jvcir.2016.03.022 |

| [8] |

Rudin LI, Osher S, Fatemi E. Nonlinear total variation based noise removal algorithms. Physica D, 1992, 60(1–4): 259-268. DOI:10.1016/0167-2789(92)90242-F |

| [9] |

Geman S, Geman D. Stochastic relaxation, Gibbs distributions, and the Bayesian restoration of images. IEEE Transactions on Pattern Analysis and Machine Intelligence, 1984, PAMI-6(6): 721-741. DOI:10.1109/TPAMI.1984.4767596 |

| [10] |

Esser E. Applications of Lagrangian-based alternating direction methods and connections to split Bregman. CAM Report 9, 2009. 31.

|

| [11] |

Geman D, Reynolds G. Constrained restoration and the recovery of discontinuities. IEEE Transactions on Pattern Analysis and Machine Intelligence, 1992, 14(3): 367-383. DOI:10.1109/34.120331 |

| [12] |

Geman D, Yang CD. Nonlinear image recovery with half-quadratic regularization. IEEE Transactions on Image Processing, 1995, 4(7): 932-946. DOI:10.1109/83.392335 |

| [13] |

Nikolova M. Analysis of the recovery of edges in images and signals by minimizing nonconvex regularized least-squares. Multiscale Modeling & Simulation, 2005, 4(3): 960-991. |

| [14] |

Aubert G, Kornprobst P. Mathematical Problems in Image Processing: Partial Differential Equations and the Calculus of Variations. 2nd ed. New York: Springer, 2006.

|

| [15] |

Zhang T. Analysis of multi-stage convex relaxation for sparse regularization. Journal of Machine Learning Research, 2010, 11: 1081-1107. |

| [16] |

Aubert G, Aujol JF. A variational approach to removing multiplicative noise. SIAM Journal on Applied Mathematics, 2008, 68(4): 925-946. DOI:10.1137/060671814 |

| [17] |

Chan TF, Esedoglu S. Aspects of total variation regularized L1 function approximation. SIAM Journal on Applied Mathematics, 2005, 65(5): 1817-1837. DOI:10.1137/040604297 |

| [18] |

Cai XH, Chan R, Zeng TY. A two-stage image segmentation method using a convex variant of the Mumford-Shah model and thresholding. SIAM Journal on Imaging Sciences, 2013, 6(1): 368-390. DOI:10.1137/120867068 |

| [19] |

Alliney S. A property of the minimum vectors of a regularizing functional defined by means of the absolute norm. IEEE Transactions on Signal Processing, 1997, 45(4): 913-917. DOI:10.1109/78.564179 |

| [20] |

Nikolova M. Minimizers of cost-functions involving nonsmooth data-fidelity terms. Application to the processing of outliers. SIAM Journal on Numerical Analysis, 2002, 40(3): 965-994. DOI:10.1137/S0036142901389165 |

| [21] |

Nikolova M. A variational approach to remove outliers and impulse noise. Journal of Mathematical Imaging and Vision, 2004, 50(1-2): 99-120. |

| [22] |

He BS, Liao LZ, Han DR, et al. A new inexact alternating directions method for monotone variational inequalities. Mathematical Programming, 2002, 92(1): 103-118. DOI:10.1007/s101070100280 |

| [23] |

Goldstein T, Osher S. The split Bregman method for L1-regularized problems. SIAM Journal on Imaging Sciences, 2009, 2(2): 323-343. DOI:10.1137/080725891 |

2018, Vol. 27

2018, Vol. 27